💡 Get help - ❓FAQ 💭Discussions 💬 Discord 📖 Documentation website

💻 Quickstart 🖼️ Models 🚀 Roadmap 🥽 Demo 🌍 Explorer 🛫 Examples

LocalAI is the free, Open Source OpenAI alternative. LocalAI acts as a drop-in replacement REST API that is compatible with the OpenAI (and Elevenlabs, Anthropic, ...) API specifications for local AI inferencing. It lets you run LLMs and generate images, audio, and more, locally or on-prem on consumer-grade hardware, and it supports multiple model families. It does not require a GPU. It is created and maintained by Ettore Di Giacinto.

📚🆕 Local Stack Family

🆕 LocalAI is now part of a comprehensive suite of AI tools designed to work together:

- LocalAGI: A powerful Local AI agent management platform that serves as a drop-in replacement for OpenAI's Responses API, enhanced with advanced agentic capabilities.

- LocalRecall: A REST-ful API and knowledge base management system that provides persistent memory and storage capabilities for AI agents.

Screenshots

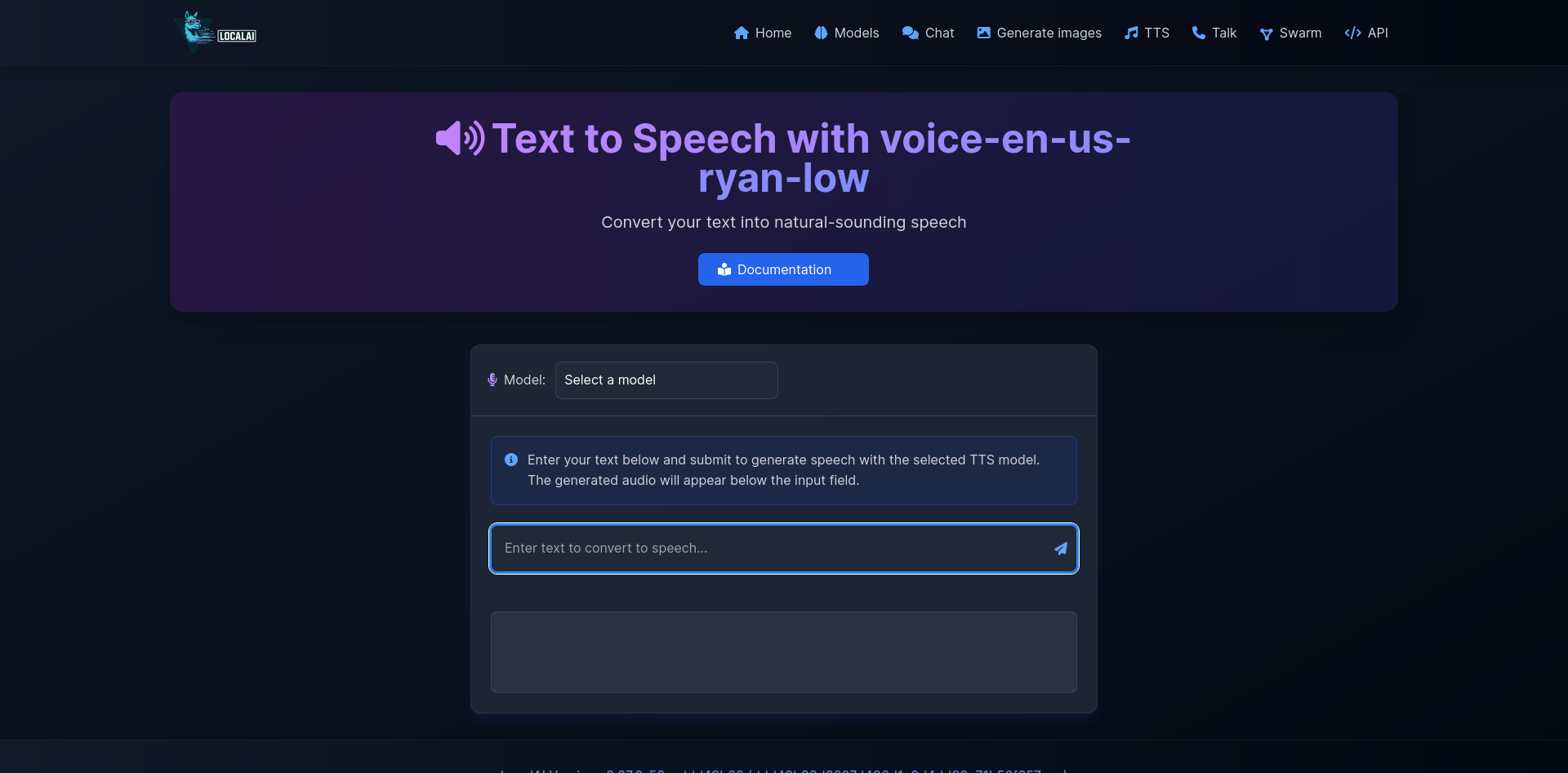

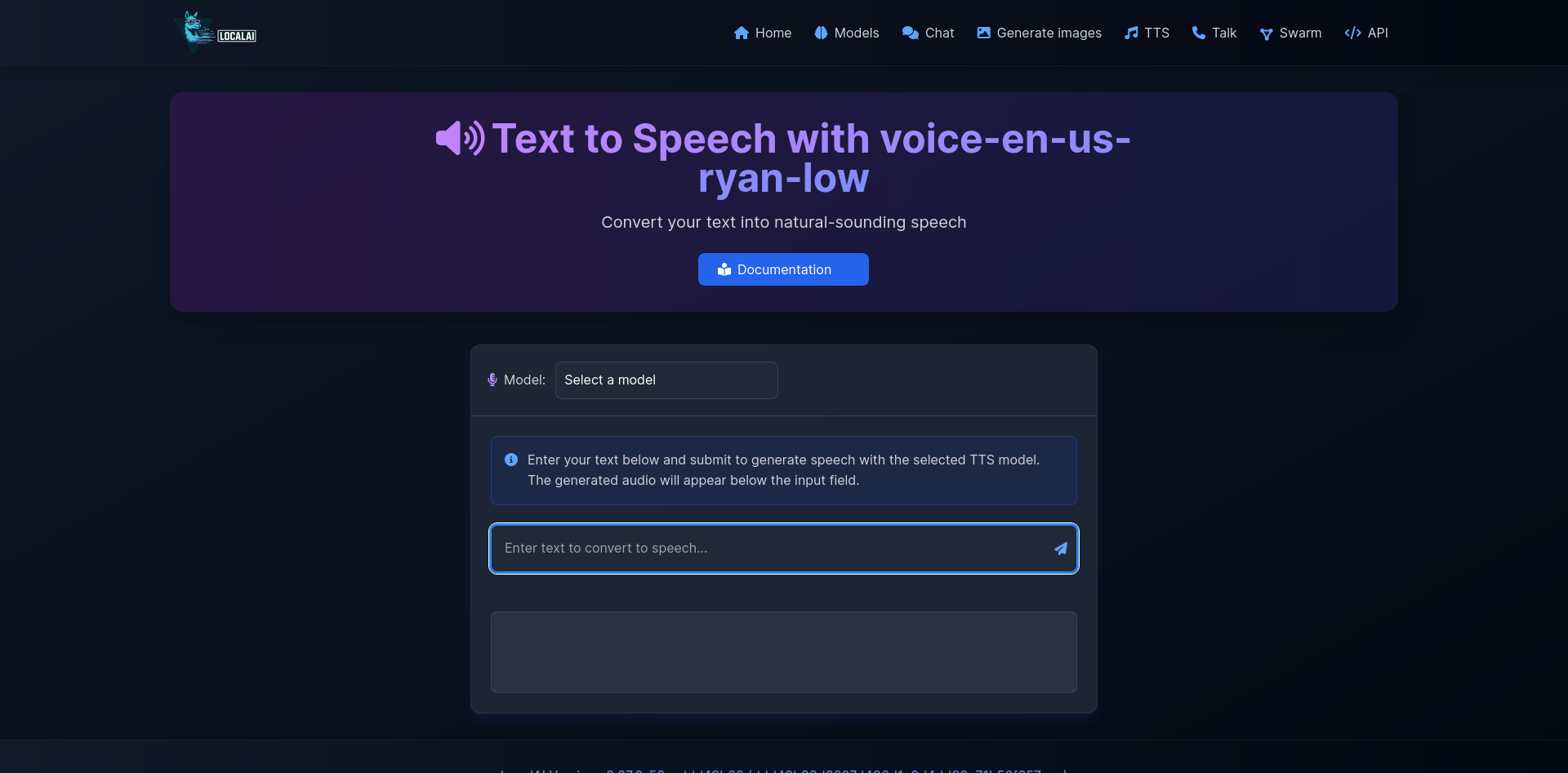

| Talk Interface | Generate Audio |

|---|---|

|

|

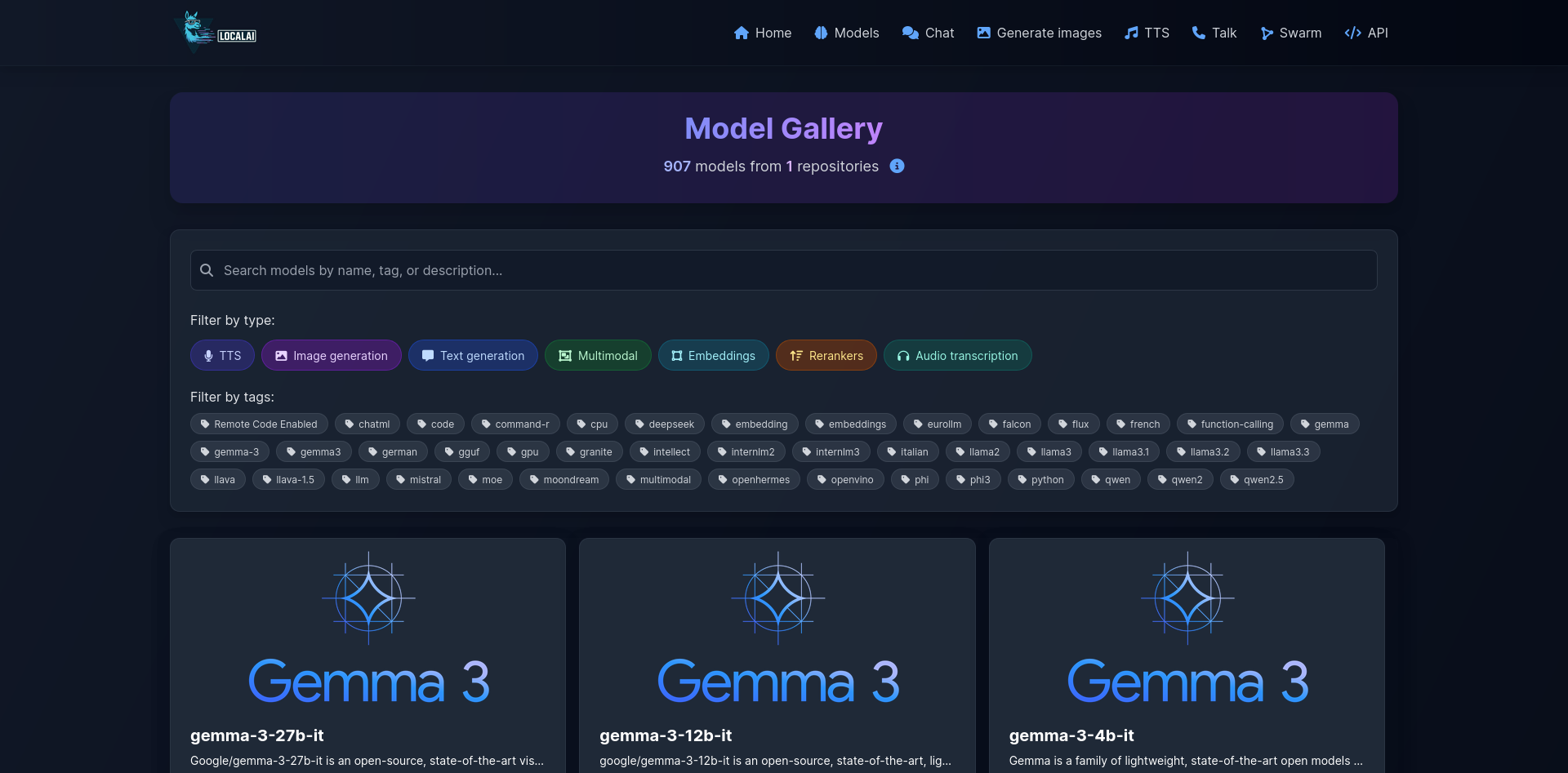

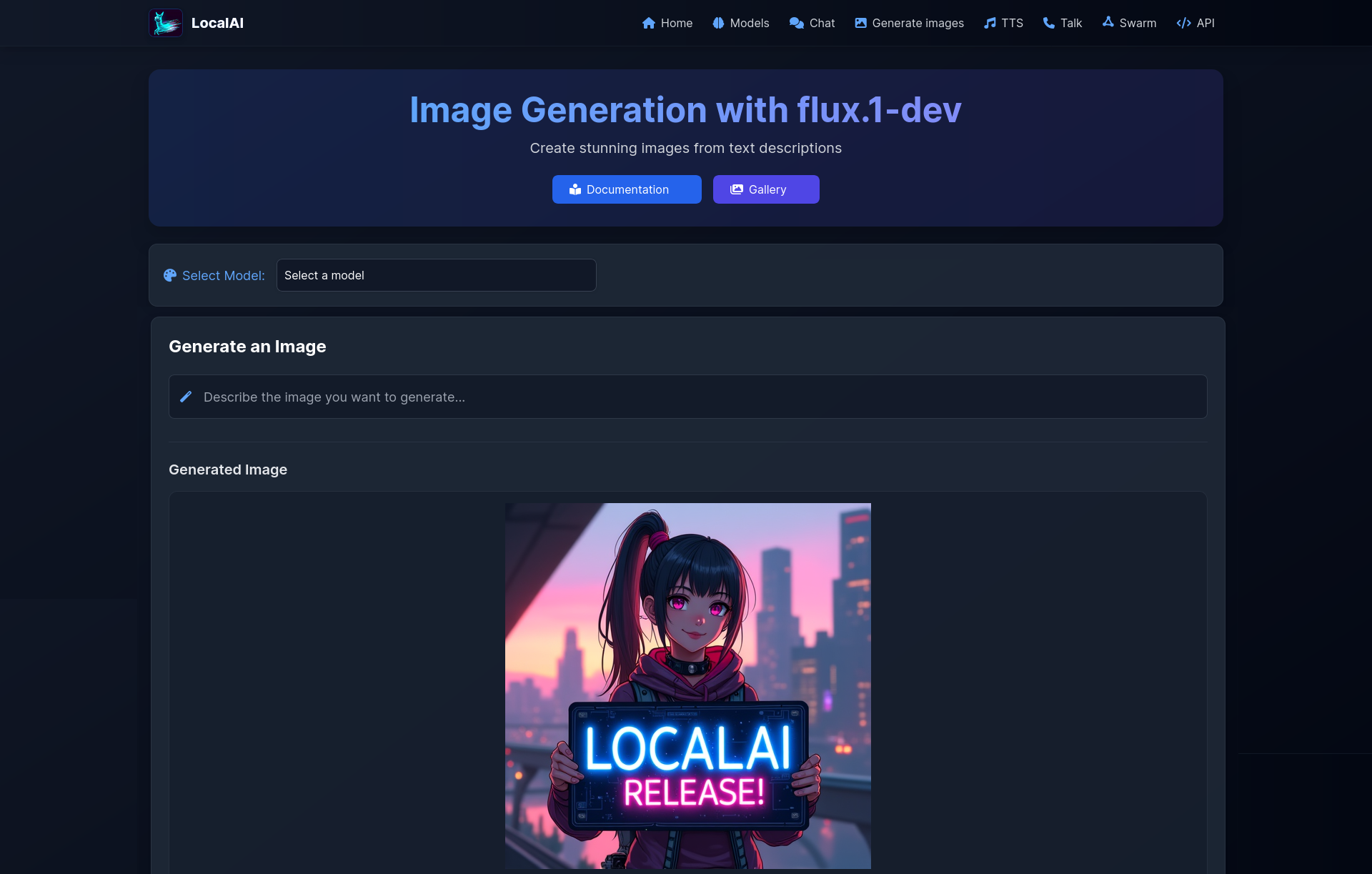

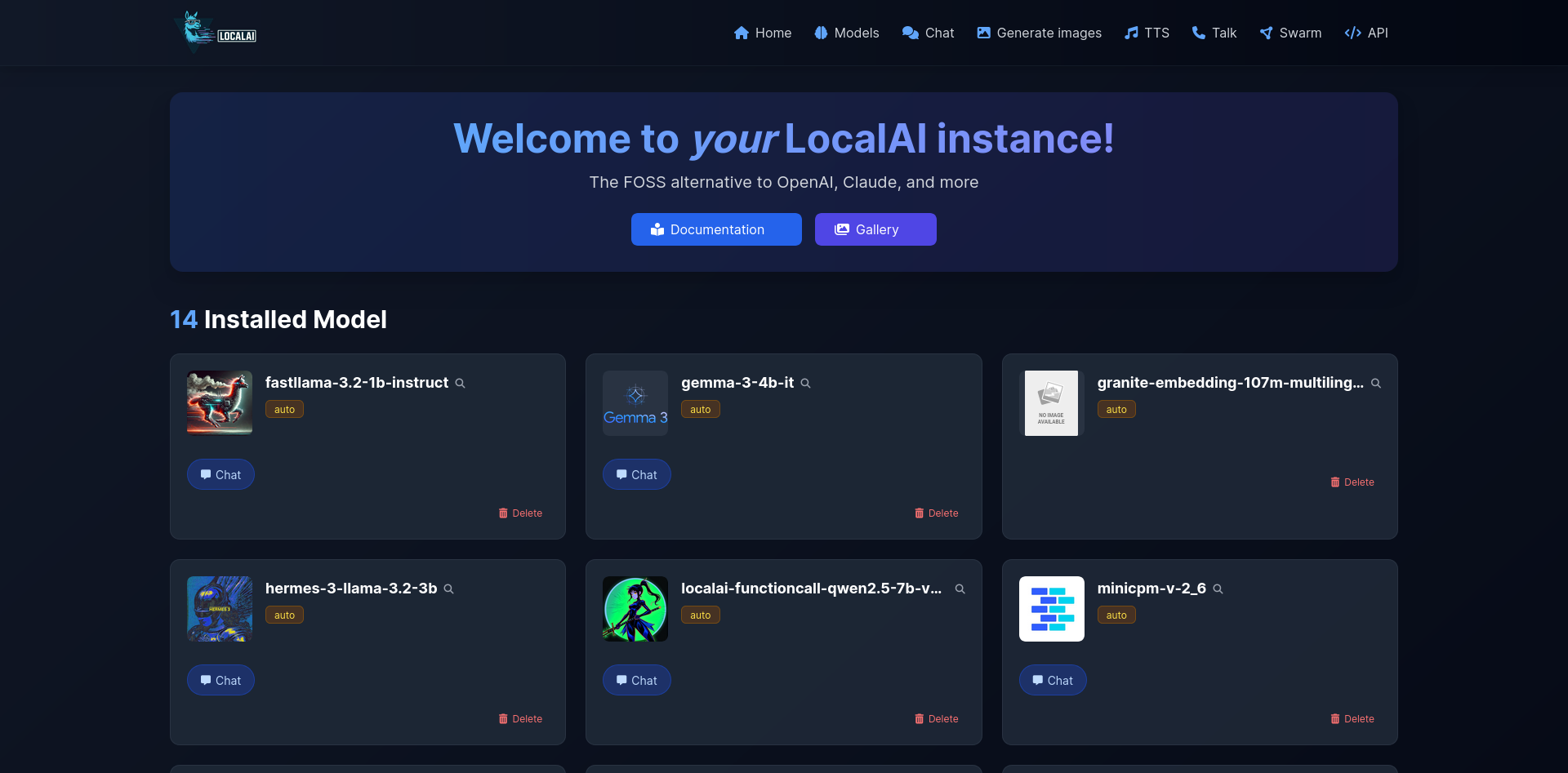

| Models Overview | Generate Images |

|---|---|

|

|

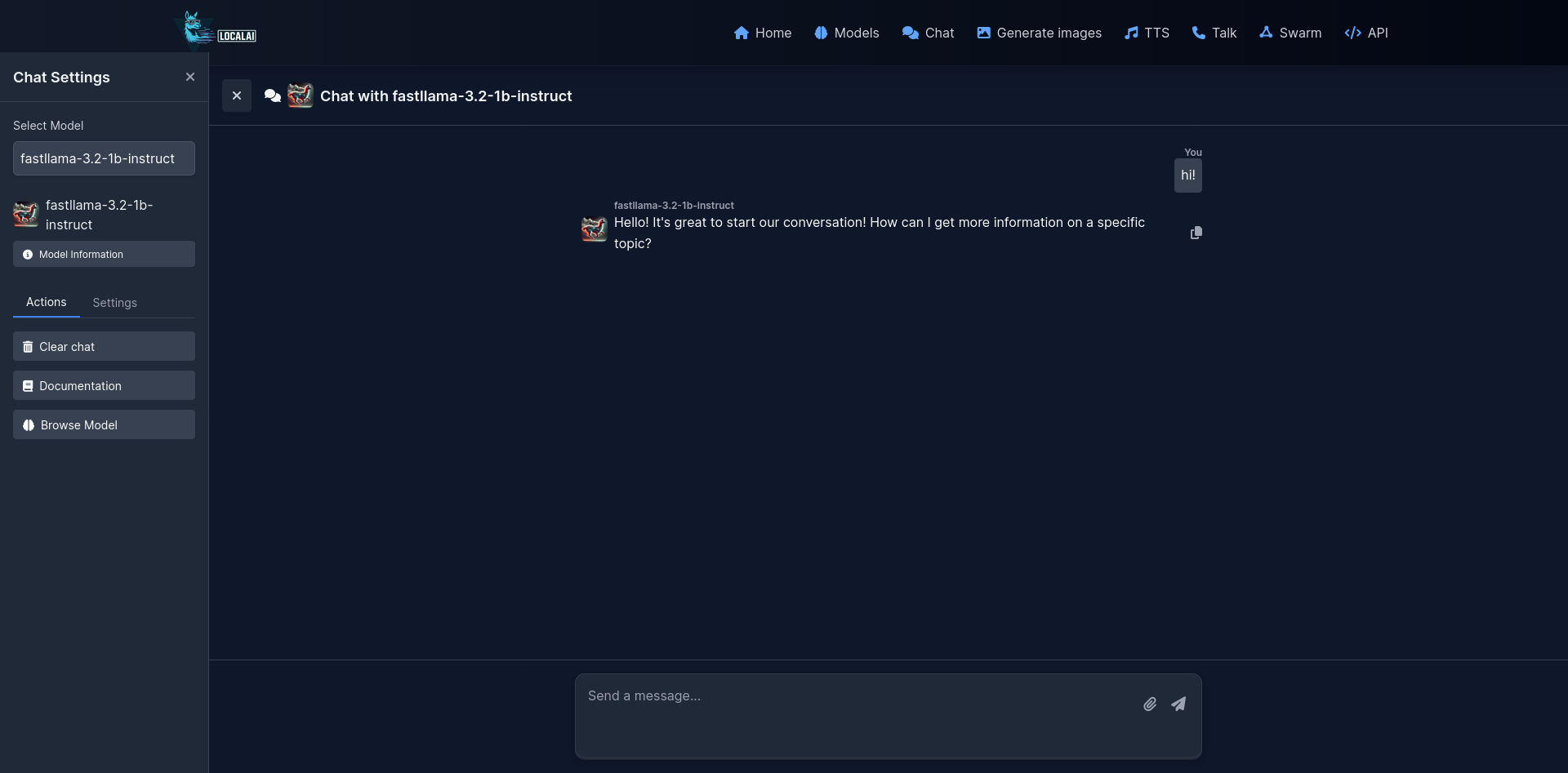

| Chat Interface | Home |

|---|---|

|

|

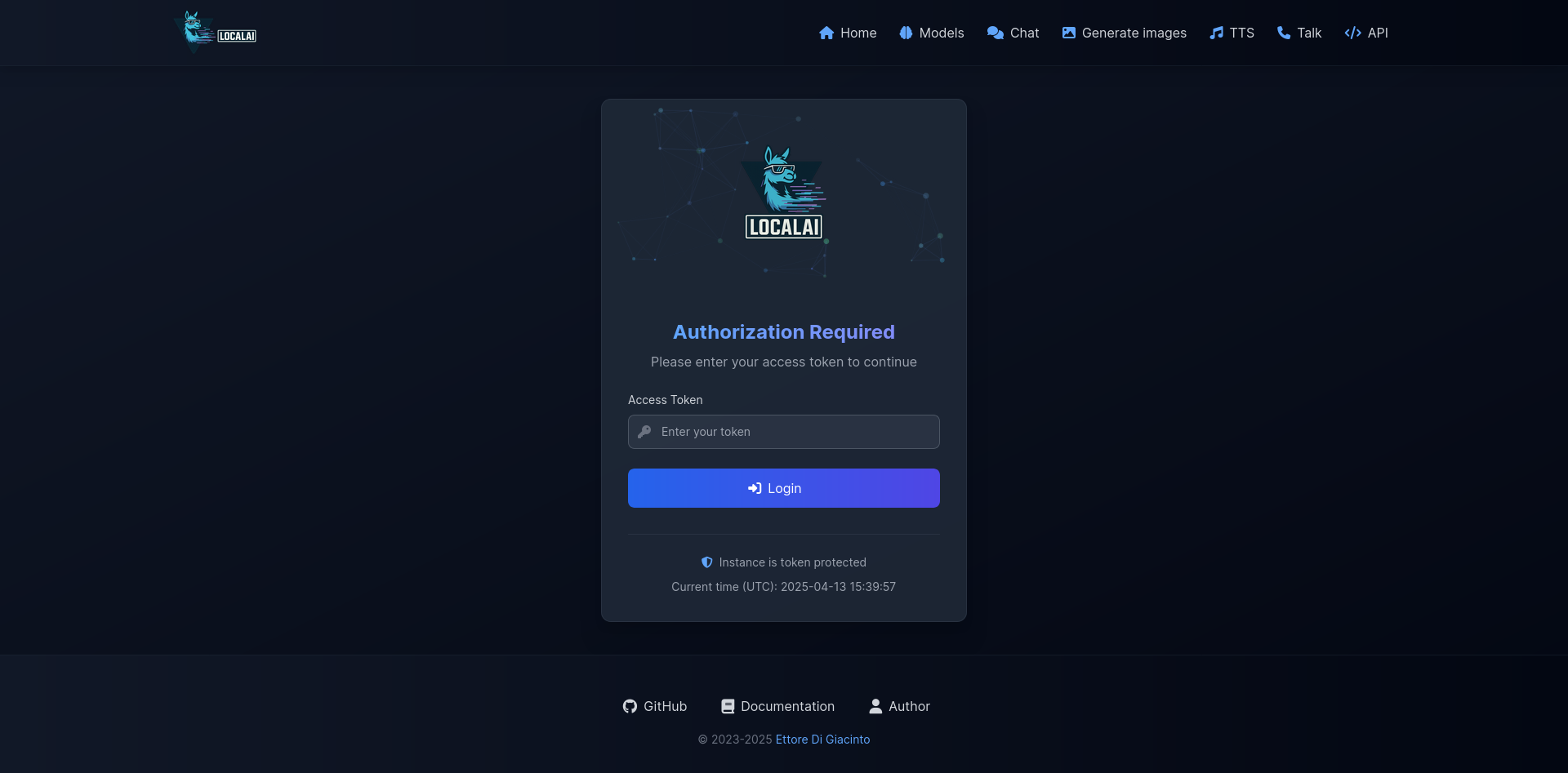

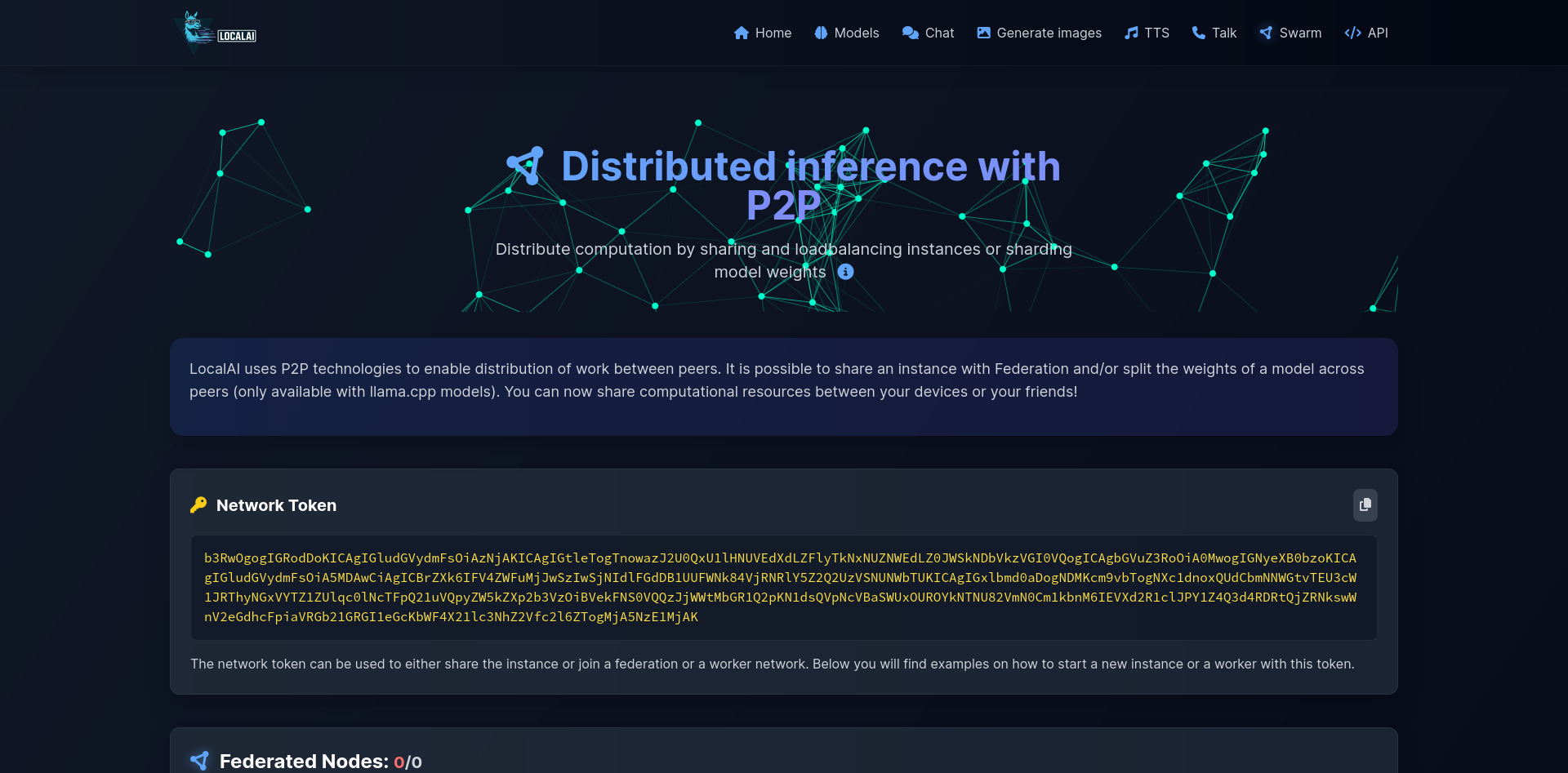

| Login | Swarm |

|---|---|

|

|

💻 Quickstart

Run the installer script:

# Basic installation

curl https://localai.io/install.sh | sh

For more installation options, see Installer Options.

Or run with docker:

CPU only image:

docker run -ti --name local-ai -p 8080:8080 localai/localai:latest

NVIDIA GPU Images:

# CUDA 12.0 with core features

docker run -ti --name local-ai -p 8080:8080 --gpus all localai/localai:latest-gpu-nvidia-cuda-12

# CUDA 12.0 with extra Python dependencies

docker run -ti --name local-ai -p 8080:8080 --gpus all localai/localai:latest-gpu-nvidia-cuda-12-extras

# CUDA 11.7 with core features

docker run -ti --name local-ai -p 8080:8080 --gpus all localai/localai:latest-gpu-nvidia-cuda-11

# CUDA 11.7 with extra Python dependencies

docker run -ti --name local-ai -p 8080:8080 --gpus all localai/localai:latest-gpu-nvidia-cuda-11-extras

# NVIDIA Jetson (L4T) ARM64

docker run -ti --name local-ai -p 8080:8080 --gpus all localai/localai:latest-nvidia-l4t-arm64

AMD GPU Images (ROCm):

# ROCm with core features

docker run -ti --name local-ai -p 8080:8080 --device=/dev/kfd --device=/dev/dri --group-add=video localai/localai:latest-gpu-hipblas

# ROCm with extra Python dependencies

docker run -ti --name local-ai -p 8080:8080 --device=/dev/kfd --device=/dev/dri --group-add=video localai/localai:latest-gpu-hipblas-extras

Intel GPU Images (oneAPI):

# Intel GPU with FP16 support

docker run -ti --name local-ai -p 8080:8080 localai/localai:latest-gpu-intel-f16

# Intel GPU with FP16 support and extra dependencies

docker run -ti --name local-ai -p 8080:8080 localai/localai:latest-gpu-intel-f16-extras

# Intel GPU with FP32 support

docker run -ti --name local-ai -p 8080:8080 localai/localai:latest-gpu-intel-f32

# Intel GPU with FP32 support and extra dependencies

docker run -ti --name local-ai -p 8080:8080 localai/localai:latest-gpu-intel-f32-extras

Vulkan GPU Images:

# Vulkan with core features

docker run -ti --name local-ai -p 8080:8080 localai/localai:latest-gpu-vulkan

AIO Images (pre-downloaded models):

# CPU version

docker run -ti --name local-ai -p 8080:8080 localai/localai:latest-aio-cpu

# NVIDIA CUDA 12 version

docker run -ti --name local-ai -p 8080:8080 --gpus all localai/localai:latest-aio-gpu-nvidia-cuda-12

# NVIDIA CUDA 11 version

docker run -ti --name local-ai -p 8080:8080 --gpus all localai/localai:latest-aio-gpu-nvidia-cuda-11

# Intel GPU version

docker run -ti --name local-ai -p 8080:8080 localai/localai:latest-aio-gpu-intel-f16

# AMD GPU version

docker run -ti --name local-ai -p 8080:8080 --device=/dev/kfd --device=/dev/dri --group-add=video localai/localai:latest-aio-gpu-hipblas

For more information about the AIO images and pre-downloaded models, see Container Documentation.
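The image tags and extra flags listed above follow a regular pattern, so they can be captured in a small lookup. The following is a minimal sketch, not part of LocalAI: the function names and the hardware labels (`cpu`, `cuda12`, `rocm`, ...) are made up for illustration, while the tags and flags are copied from the commands above. It only prints the command so that it can be reviewed before being run.

```shell
#!/bin/sh
# Hypothetical helper: map a hardware label to a LocalAI image tag.
# The labels are invented for this sketch; the tags come from the list above.
localai_image() {
  case "$1" in
    cpu)    echo "localai/localai:latest" ;;
    cuda12) echo "localai/localai:latest-gpu-nvidia-cuda-12" ;;
    cuda11) echo "localai/localai:latest-gpu-nvidia-cuda-11" ;;
    l4t)    echo "localai/localai:latest-nvidia-l4t-arm64" ;;
    rocm)   echo "localai/localai:latest-gpu-hipblas" ;;
    intel)  echo "localai/localai:latest-gpu-intel-f16" ;;
    vulkan) echo "localai/localai:latest-gpu-vulkan" ;;
    *)      echo "unknown hardware label: $1" >&2; return 1 ;;
  esac
}

# Extra docker flags used for each hardware family in the commands above.
localai_docker_flags() {
  case "$1" in
    cuda12|cuda11|l4t) echo "--gpus all" ;;
    rocm)              echo "--device=/dev/kfd --device=/dev/dri --group-add=video" ;;
    *)                 echo "" ;;
  esac
}

# Print (do not run) the full docker command for a hardware label.
localai_run_cmd() {
  image=$(localai_image "$1") || return 1
  flags=$(localai_docker_flags "$1")
  # ${flags:+...} adds the flags and a separating space only when non-empty.
  echo "docker run -ti --name local-ai -p 8080:8080 ${flags:+$flags }$image"
}

localai_run_cmd cuda12
```

To start the container directly, the printed command can be passed to the shell, for example `eval "$(localai_run_cmd rocm)"`.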

To load models:

# From the model gallery (see available models with `local-ai models list`, in the WebUI from the model tab, or visiting https://models.localai.io)

local-ai run llama-3.2-1b-instruct:q4_k_m

# Start LocalAI with the phi-2 model directly from huggingface

local-ai run huggingface://TheBloke/phi-2-GGUF/phi-2.Q8_0.gguf

# Install and run a model from the Ollama OCI registry

local-ai run ollama://gemma:2b

# Run a model from a configuration file

local-ai run https://gist.githubusercontent.com/.../phi-2.yaml

# Install and run a model from a standard OCI registry (e.g., Docker Hub)

local-ai run oci://localai/phi-2:latest
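The five forms above differ only in their prefix. As a rough illustration, the sketch below classifies a model reference by that prefix. The function name and the returned labels are hypothetical, and this is not a description of how `local-ai` resolves references internally; it simply mirrors the examples listed here.

```shell
#!/bin/sh
# Hypothetical helper: report which kind of source a model reference
# points to, based only on the prefixes shown in the examples above.
model_source() {
  case "$1" in
    huggingface://*)    echo "huggingface" ;;
    ollama://*)         echo "ollama" ;;
    oci://*)            echo "oci" ;;
    http://*|https://*) echo "config-url" ;;
    # Anything without a recognized prefix is assumed to be a gallery name.
    *)                  echo "gallery" ;;
  esac
}

for ref in \
  "llama-3.2-1b-instruct:q4_k_m" \
  "huggingface://TheBloke/phi-2-GGUF/phi-2.Q8_0.gguf" \
  "ollama://gemma:2b" \
  "oci://localai/phi-2:latest"
do
  printf '%s -> %s\n' "$ref" "$(model_source "$ref")"
done
```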

For more information, see 💻 Getting started
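Once a model is loaded, the server can be queried like any OpenAI-compatible API. The sketch below assumes LocalAI is listening on `localhost:8080` (the port published in the docker commands above), that it serves the OpenAI-style `/v1/chat/completions` route, and that the model is addressed by the name passed to `local-ai run`. The function names and the `LOCALAI_URL` variable are made up for this example.

```shell
#!/bin/sh
# Escape backslashes and double quotes so text can sit inside a JSON string.
# Sketch only: control characters such as newlines are not handled.
json_escape() {
  printf '%s' "$1" | sed 's/\\/\\\\/g; s/"/\\"/g'
}

# Build an OpenAI-style chat completion request body.
# $1 = model name, $2 = user prompt
chat_payload() {
  model=$(json_escape "$1")
  prompt=$(json_escape "$2")
  printf '{"model":"%s","messages":[{"role":"user","content":"%s"}]}' \
    "$model" "$prompt"
}

# Send the request. LOCALAI_URL is a hypothetical override for this sketch;
# it defaults to the port published in the docker commands above.
chat_request() {
  curl -s "${LOCALAI_URL:-http://localhost:8080}/v1/chat/completions" \
    -H "Content-Type: application/json" \
    -d "$(chat_payload "$1" "$2")"
}

# Show the request body without contacting the server.
chat_payload "llama-3.2-1b-instruct:q4_k_m" "How are you?"
```

With a server running, `chat_request "llama-3.2-1b-instruct:q4_k_m" "How are you?"` sends the same body and prints the JSON response.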

📰 Latest project news

- Apr 2025: LocalAGI and LocalRecall join the LocalAI family stack.

- Apr 2025: WebUI overhaul, AIO images updates

- Feb 2025: Backend cleanup, Breaking changes, new backends (kokoro, OutelTTS, faster-whisper), Nvidia L4T images

- Jan 2025: LocalAI model release: https://huggingface.co/mudler/LocalAI-functioncall-phi-4-v0.3, SANA support in diffusers: https://github.com/mudler/LocalAI/pull/4603

- Dec 2024: stablediffusion.cpp backend (ggml) added ( https://github.com/mudler/LocalAI/pull/4289 )

- Nov 2024: Bark.cpp backend added ( https://github.com/mudler/LocalAI/pull/4287 )

- Nov 2024: Voice activity detection models (VAD) added to the API: https://github.com/mudler/LocalAI/pull/4204

- Oct 2024: examples moved to LocalAI-examples

- Aug 2024: 🆕 FLUX-1, P2P Explorer

- July 2024: 🔥🔥 🆕 P2P Dashboard, LocalAI Federated mode and AI Swarms: https://github.com/mudler/LocalAI/pull/2723. P2P Global community pools: https://github.com/mudler/LocalAI/issues/3113

- May 2024: 🔥🔥 Decentralized P2P llama.cpp: https://github.com/mudler/LocalAI/pull/2343 (peer2peer llama.cpp!) 👉 Docs https://localai.io/features/distribute/

- May 2024: 🔥🔥 Distributed inferencing: https://github.com/mudler/LocalAI/pull/2324

- April 2024: Reranker API: https://github.com/mudler/LocalAI/pull/2121

Roadmap items: List of issues

🚀 Features

- 📖 Text generation with GPTs (llama.cpp, transformers, vllm ... 📖 and more)

- 🗣 Text to Audio

- 🔈 Audio to Text (audio transcription with whisper.cpp)

- 🎨 Image generation

- 🔥 OpenAI-alike tools API

- 🧠 Embeddings generation for vector databases

- ✍️ Constrained grammars

- 🖼️ Download Models directly from Huggingface

- 🥽 Vision API

- 📈 Reranker API

- 🆕🖧 P2P Inferencing

- Agentic capabilities

- 🔊 Voice activity detection (Silero-VAD support)

- 🌍 Integrated WebUI!

🔗 Community and integrations

Build and deploy custom containers:

WebUIs:

- https://github.com/Jirubizu/localai-admin

- https://github.com/go-skynet/LocalAI-frontend

- QA-Pilot (an interactive chat project that leverages LocalAI LLMs for rapid understanding and navigation of GitHub code repositories) https://github.com/reid41/QA-Pilot

Model galleries

Other:

- Helm chart https://github.com/go-skynet/helm-charts

- VSCode extension https://github.com/badgooooor/localai-vscode-plugin

- Langchain: https://python.langchain.com/docs/integrations/providers/localai/

- Terminal utility https://github.com/djcopley/ShellOracle

- Local Smart assistant https://github.com/mudler/LocalAGI

- Home Assistant https://github.com/sammcj/homeassistant-localai / https://github.com/drndos/hass-openai-custom-conversation / https://github.com/valentinfrlch/ha-gpt4vision

- Discord bot https://github.com/mudler/LocalAGI/tree/main/examples/discord

- Slack bot https://github.com/mudler/LocalAGI/tree/main/examples/slack

- Shell-Pilot (interact with LLMs using LocalAI models via pure shell scripts on your Linux or macOS system) https://github.com/reid41/shell-pilot

- Telegram bot https://github.com/mudler/LocalAI/tree/master/examples/telegram-bot

- Another Telegram Bot https://github.com/JackBekket/Hellper

- Auto-documentation https://github.com/JackBekket/Reflexia

- GitHub bot that answers issues, using code and documentation as context https://github.com/JackBekket/GitHelper

- Github Actions: https://github.com/marketplace/actions/start-localai

- Examples: https://github.com/mudler/LocalAI/tree/master/examples/

🔗 Resources

- LLM finetuning guide

- How to build locally

- How to install in Kubernetes

- Projects integrating LocalAI

- How tos section (curated by our community)

📖 🎥 Media, Blogs, Social

- Run Visual studio code with LocalAI (SUSE)

- 🆕 Run LocalAI on Jetson Nano Devkit

- Run LocalAI on AWS EKS with Pulumi

- Run LocalAI on AWS

- Create a slackbot for teams and OSS projects that answer to documentation

- LocalAI meets k8sgpt

- Question Answering on Documents locally with LangChain, LocalAI, Chroma, and GPT4All

- Tutorial to use k8sgpt with LocalAI

Citation

If you use this repository or its data in a downstream project, please consider citing it with:

@misc{localai,
  author = {Ettore Di Giacinto},
  title = {LocalAI: The free, Open source OpenAI alternative},
  year = {2023},
  publisher = {GitHub},
  journal = {GitHub repository},
  howpublished = {\url{https://github.com/go-skynet/LocalAI}},
}

❤️ Sponsors

Do you find LocalAI useful?

Support the project by becoming a backer or sponsor. Your logo will show up here with a link to your website.

A huge thank you to our generous sponsors, who support this project by covering CI expenses, and to everyone on our sponsor list:

🌟 Star history

📖 License

LocalAI is a community-driven project created by Ettore Di Giacinto.

MIT - Author Ettore Di Giacinto mudler@localai.io

🙇 Acknowledgements

LocalAI couldn't have been built without the help of great software already available from the community. Thank you!

- llama.cpp

- https://github.com/tatsu-lab/stanford_alpaca

- https://github.com/cornelk/llama-go for the initial ideas

- https://github.com/antimatter15/alpaca.cpp

- https://github.com/EdVince/Stable-Diffusion-NCNN

- https://github.com/ggerganov/whisper.cpp

- https://github.com/rhasspy/piper

🤗 Contributors

This is a community project; special thanks to our contributors! 🤗