Mirror of https://github.com/mudler/LocalAI.git (synced 2025-05-20 10:35:01 +00:00)

docs: Initial import from localai-website (#1312)

Signed-off-by: Ettore Di Giacinto <mudler@localai.io>

Parent: 763f94ca80
Commit: c5c77d2b0d

66 changed files with 6111 additions and 0 deletions

docs/content/integrations/AnythingLLM.md (Normal file, 70 lines)

@@ -0,0 +1,70 @@

+++
disableToc = false
title = "AnythingLLM"
description="Integrate your LocalAI LLM and embedding models into AnythingLLM by Mintplex Labs"
weight = 2
+++

AnythingLLM is an open-source, ChatGPT-equivalent tool for chatting with documents and more in a secure environment, by [Mintplex Labs Inc](https://github.com/Mintplex-Labs).

⭐ Star on GitHub - https://github.com/Mintplex-Labs/anything-llm

* Chat with your LocalAI models (or hosted models like OpenAI, Anthropic, and Azure)
* Embed documents (txt, pdf, json, and more) using your LocalAI Sentence Transformers
* Select any vector database you want (Chroma, Pinecone, Qdrant, Weaviate) or use the embedded on-instance vector database (LanceDB)
* Supports single or multi-user tenancy with built-in permissions
* Full developer API
* Locally running SQLite db for minimal setup.

AnythingLLM is a fully transparent tool to deliver a customized, white-label ChatGPT equivalent experience using only the models and services you or your organization are comfortable using.

### Why AnythingLLM?

AnythingLLM aims to enable you to quickly and comfortably get a ChatGPT equivalent experience using your proprietary documents for your organization with zero compromise on security or comfort.

### What does AnythingLLM include?

- Full UI
- Full admin console and panel for managing users, chats, model selection, vector db connection, and embedder selection
- Multi-user support and logins
- Supports both desktop and mobile viewports
- Built-in vector database, so no data leaves your instance
- Docker support

## Install

### Local via docker

Running via Docker and integrating with your LocalAI instance is a breeze.

First, pull in the latest AnythingLLM Docker image:

`docker pull mintplexlabs/anythingllm:master`

Next, run the image in a container, exposing port `3001`:

`docker run -d -p 3001:3001 mintplexlabs/anythingllm:master`

Now open `http://localhost:3001` and you will start onboarding, setting up your AnythingLLM instance to your comfort level.

## Integration with your LocalAI instance

There are two areas where you can leverage your models loaded into LocalAI: LLM and Embedding. Any LLM you select should be ready to serve chat completions.

### LLM model selection

During onboarding and from the sidebar settings, you can select `LocalAI` as your LLM. Here you can set both the model and the token limit of the specific model. The dropdown will populate automatically once your URL is set.

The URL should look like `http://localhost:8000/v1` or wherever your LocalAI instance is being served from. Non-localhost URLs are permitted if hosting LocalAI on cloud services.
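
Before entering the URL, it can help to confirm that the endpoint responds. The following is a minimal sketch, assuming your LocalAI instance exposes the OpenAI-compatible model listing under `/models` relative to the base URL. `models_url` is a hypothetical helper, and the port is simply the one from the example above.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of AnythingLLM or LocalAI): build the
# model-listing URL from the base URL you plan to enter in AnythingLLM.
models_url() {
  # "${1%/}" drops a single trailing slash, if present
  printf '%s/models\n' "${1%/}"
}

# Usage, assuming a LocalAI instance is reachable at this address:
#   curl -fsS "$(models_url http://localhost:8000/v1)"
```

If AnythingLLM itself runs in a container, `localhost` refers to that container, so the base URL may need to point at the host or at the LocalAI container instead.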

### LLM embedding model selection

During onboarding and from the sidebar settings, you can select `LocalAI` as your preferred embedding engine. This model will be used whenever you upload a document via AnythingLLM. Here you can choose from the models available via the LocalAI API. The dropdown will populate automatically once your URL is set.

The URL should look like `http://localhost:8000/v1` or wherever your LocalAI instance is being served from. Non-localhost URLs are permitted if hosting LocalAI on cloud services.
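
To check that the selected model can actually produce embeddings, a request can be sent to the embeddings route directly. This is a minimal sketch, assuming an OpenAI-style `/embeddings` route under the same base URL. `embeddings_body` and the model name `my-embedding-model` are hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the JSON body for an OpenAI-style embeddings request.
# This sketch does no JSON escaping, so the model name and input text must not
# contain double quotes or backslashes.
embeddings_body() {
  # $1 = model name, $2 = text to embed
  printf '{"model":"%s","input":"%s"}\n' "$1" "$2"
}

# Usage, assuming LocalAI serves an embedding model under the name you pass:
#   curl -fsS http://localhost:8000/v1/embeddings \
#     -H 'Content-Type: application/json' \
#     -d "$(embeddings_body my-embedding-model 'hello world')"
```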

docs/content/integrations/BMO-Chatbot.md (Normal file, 58 lines)

@@ -0,0 +1,58 @@

+++
disableToc = false
title = "BMO Chatbot"
weight = 2
+++

Generate and brainstorm ideas while creating your notes using Large Language Models (LLMs) such as OpenAI's "gpt-3.5-turbo" and "gpt-4" for Obsidian.

GitHub Link - https://github.com/longy2k/obsidian-bmo-chatbot

## Features
- **Chat from anywhere in Obsidian:** Chat with your bot from anywhere within Obsidian.
- **Chat with current note:** Use your chatbot to reference and engage within your current note.
- **Chatbot responds in Markdown:** Receive formatted responses in Markdown for consistency.
- **Customizable bot name:** Personalize the chatbot's name.
- **System role prompt:** Configure the chatbot to prompt for user roles before responding to messages.
- **Set Max Tokens and Temperature:** Customize the length and randomness of the chatbot's responses with Max Tokens and Temperature settings.
- **System theme color accents:** Seamlessly matches the chatbot's interface with your system's color scheme.
- **Interact with self-hosted Large Language Models (LLMs):** Use the REST API URL provided to interact with self-hosted Large Language Models (LLMs) using [LocalAI](https://localai.io/howtos/).

## Requirements
To use this plugin with [LocalAI](https://localai.io/howtos/), you will need to have the self-hosted API set up and running. You can follow the instructions provided by the self-hosted API provider to get it up and running.

Once you have the REST API URL for your self-hosted API, you can use it with this plugin to interact with your models.

Explore some ``GGUF`` models at [TheBloke](https://huggingface.co/TheBloke).

## How to activate the plugin
Two methods:

Obsidian Community plugins (**Recommended**):
1. Search for "BMO Chatbot" in the Obsidian Community plugins.
2. Enable "BMO Chatbot" in the settings.

To activate the plugin from this repo:
1. Navigate to the plugin's folder in your terminal.
2. Run `npm install` to install any necessary dependencies for the plugin.
3. Once the dependencies have been installed, run `npm run build` to build the plugin.
4. Once the plugin has been built, it should be ready to activate.

## Getting Started
To start using the plugin, enable it in your settings menu and enter your OpenAI API key. After completing these steps, you can access the bot panel by clicking on the bot icon in the left sidebar.

If you want to clear the chat history, simply click on the bot icon again in the left ribbon bar.

## Supported Models
- OpenAI
  - gpt-3.5-turbo
  - gpt-3.5-turbo-16k
  - gpt-4
- Anthropic
  - claude-instant-1.2
  - claude-2.0
- Any self-hosted models using [LocalAI](https://localai.io/howtos/)

## Other Notes
"BMO" is a tag name for this project, inspired by the character BMO from the animated TV show "Adventure Time."

docs/content/integrations/Bionic-GPT.md (Normal file, 103 lines)

@@ -0,0 +1,103 @@

+++
disableToc = false
title = "BionicGPT"
weight = 2
+++

BionicGPT is an on-premise replacement for ChatGPT, offering the advantages of generative AI while maintaining strict data confidentiality. It can run on your laptop or scale into the data center.

BionicGPT Homepage - https://bionic-gpt.com

GitHub link - https://github.com/purton-tech/bionicgpt

<!-- Try it out -->
## Try it out
Cut and paste the following into a `docker-compose.yaml` file and run `docker-compose up -d`, then access the user interface at http://localhost:7800/auth/sign_up.

This has been tested on an AMD 2700x with 16 GB of RAM. The included `ggml-gpt4all-j` model runs on CPU only.

**Warning** - The images in this `docker-compose` are large due to having the model weights pre-loaded for convenience.

```yaml
services:

  # LocalAI with pre-loaded ggml-gpt4all-j
  local-ai:
    image: ghcr.io/purton-tech/bionicgpt-model-api:llama-2-7b-chat

  # Handles parsing of multiple documents types.
  unstructured:
    image: downloads.unstructured.io/unstructured-io/unstructured-api:db264d8
    ports:
      - "8000:8000"

  # Handles routing between the application, barricade and the LLM API
  envoy:
    image: ghcr.io/purton-tech/bionicgpt-envoy:1.1.10
    ports:
      - "7800:7700"

  # Postgres pre-loaded with pgVector
  db:
    image: ankane/pgvector
    environment:
      POSTGRES_PASSWORD: testpassword
      POSTGRES_USER: postgres
      POSTGRES_DB: finetuna
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U postgres"]
      interval: 10s
      timeout: 5s
      retries: 5

  # Sets up our database tables
  migrations:
    image: ghcr.io/purton-tech/bionicgpt-db-migrations:1.1.10
    environment:
      DATABASE_URL: postgresql://postgres:testpassword@db:5432/postgres?sslmode=disable
    depends_on:
      db:
        condition: service_healthy

  # Barricade handles all /auth routes for user sign up and sign in.
  barricade:
    image: purtontech/barricade
    environment:
      # This secret key is used to encrypt cookies.
      SECRET_KEY: 190a5bf4b3cbb6c0991967ab1c48ab30790af876720f1835cbbf3820f4f5d949
      DATABASE_URL: postgresql://postgres:testpassword@db:5432/postgres?sslmode=disable
      FORWARD_URL: app
      FORWARD_PORT: 7703
      REDIRECT_URL: /app/post_registration
    depends_on:
      db:
        condition: service_healthy
      migrations:
        condition: service_completed_successfully

  # Our axum server delivering our user interface
  embeddings-job:
    image: ghcr.io/purton-tech/bionicgpt-embeddings-job:1.1.10
    environment:
      APP_DATABASE_URL: postgresql://ft_application:testpassword@db:5432/postgres?sslmode=disable
    depends_on:
      db:
        condition: service_healthy
      migrations:
        condition: service_completed_successfully

  # Our axum server delivering our user interface
  app:
    image: ghcr.io/purton-tech/bionicgpt:1.1.10
    environment:
      APP_DATABASE_URL: postgresql://ft_application:testpassword@db:5432/postgres?sslmode=disable
    depends_on:
      db:
        condition: service_healthy
      migrations:
        condition: service_completed_successfully
```
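
The `SECRET_KEY` in the compose file above is a published sample value, so it may be worth replacing before exposing the stack. Below is a sketch for generating a value of the same shape (64 hexadecimal characters). It assumes barricade accepts any key of that form, which this page does not confirm, and `new_secret_key` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print 32 random bytes as 64 lowercase hex characters.
new_secret_key() {
  # od prints the bytes as space-separated hex pairs; tr strips spaces and newlines
  head -c 32 /dev/urandom | od -An -v -tx1 | tr -d ' \n'
  printf '\n'
}

# Usage: paste the printed value into SECRET_KEY in docker-compose.yaml.
#   new_secret_key
```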

## Kubernetes Ready

BionicGPT is optimized to run on Kubernetes and implements the full pipeline of LLM fine-tuning from data acquisition to user interface.

docs/content/integrations/Flowise.md (Normal file, 54 lines)

@@ -0,0 +1,54 @@

+++
disableToc = false
title = "Flowise"
weight = 2
+++

Build LLM Apps Easily

GitHub Link - https://github.com/FlowiseAI/Flowise

## ⚡Local Install

Download and Install [NodeJS](https://nodejs.org/en/download) >= 18.15.0

1. Install Flowise
    ```bash
    npm install -g flowise
    ```
2. Start Flowise

    ```bash
    npx flowise start
    ```

3. Open [http://localhost:3000](http://localhost:3000)

## 🐳 Docker

### Docker Compose

1. Go to `docker` folder at the root of the project
2. Copy `.env.example` file, paste it into the same location, and rename to `.env`
3. `docker-compose up -d`
4. Open [http://localhost:3000](http://localhost:3000)
5. You can bring the containers down and remove their images with `docker-compose down --rmi all`

### Docker Compose (Flowise + LocalAI)

1. In a terminal, run ``git clone https://github.com/go-skynet/LocalAI``
2. Then run ``cd LocalAI/examples/flowise``
3. Then run ``docker-compose up -d --pull always``
4. Open [http://localhost:3000](http://localhost:3000)
5. You can bring the containers down and remove their images with `docker-compose down --rmi all`

## 🌱 Env Variables

Flowise supports several environment variables to configure your instance. You can specify them in the `.env` file inside the `packages/server` folder. Read [more](https://github.com/FlowiseAI/Flowise/blob/main/CONTRIBUTING.md#-env-variables).

## 📖 Documentation

[Flowise Docs](https://docs.flowiseai.com/)

docs/content/integrations/K8sGPT.md (Normal file, 466 lines)

@@ -0,0 +1,466 @@

+++
disableToc = false
title = "k8sgpt"
weight = 2
+++

K8sGPT is a tool for scanning your Kubernetes clusters, diagnosing, and triaging issues in simple English.

It has SRE experience codified into its analyzers and helps to pull out the most relevant information to enrich it with AI.

GitHub Link - https://github.com/k8sgpt-ai/k8sgpt

## CLI Installation

### Linux/Mac via brew

```
brew tap k8sgpt-ai/k8sgpt
brew install k8sgpt
```

<details>
<summary>RPM-based installation (RedHat/CentOS/Fedora)</summary>

**32 bit:**
<!---x-release-please-start-version-->
```
curl -LO https://github.com/k8sgpt-ai/k8sgpt/releases/download/v0.3.18/k8sgpt_386.rpm
sudo rpm -ivh k8sgpt_386.rpm
```
<!---x-release-please-end-->

**64 bit:**

<!---x-release-please-start-version-->
```
curl -LO https://github.com/k8sgpt-ai/k8sgpt/releases/download/v0.3.18/k8sgpt_amd64.rpm
sudo rpm -ivh k8sgpt_amd64.rpm
```
<!---x-release-please-end-->
</details>

<details>
<summary>DEB-based installation (Ubuntu/Debian)</summary>

**32 bit:**
<!---x-release-please-start-version-->
```
curl -LO https://github.com/k8sgpt-ai/k8sgpt/releases/download/v0.3.18/k8sgpt_386.deb
sudo dpkg -i k8sgpt_386.deb
```
<!---x-release-please-end-->
**64 bit:**

<!---x-release-please-start-version-->
```
curl -LO https://github.com/k8sgpt-ai/k8sgpt/releases/download/v0.3.18/k8sgpt_amd64.deb
sudo dpkg -i k8sgpt_amd64.deb
```
<!---x-release-please-end-->
</details>

<details>

<summary>APK-based installation (Alpine)</summary>

**32 bit:**
<!---x-release-please-start-version-->
```
curl -LO https://github.com/k8sgpt-ai/k8sgpt/releases/download/v0.3.18/k8sgpt_386.apk
apk add k8sgpt_386.apk
```
<!---x-release-please-end-->
**64 bit:**
<!---x-release-please-start-version-->
```
curl -LO https://github.com/k8sgpt-ai/k8sgpt/releases/download/v0.3.18/k8sgpt_amd64.apk
apk add k8sgpt_amd64.apk
```
<!---x-release-please-end-->
</details>

<details>
<summary>Failing Installation on WSL or Linux (missing gcc)</summary>
When installing k8sgpt with Homebrew on WSL or Linux, you may encounter the following error:

```
==> Installing k8sgpt from k8sgpt-ai/k8sgpt Error: The following formula cannot be installed from a bottle and must be
built from the source. k8sgpt Install Clang or run brew install gcc.
```

If you install gcc as suggested, the problem will persist. Instead, you need to install the build-essential package.
```
sudo apt-get update
sudo apt-get install build-essential
```
</details>

### Windows

* Download the latest Windows binaries of **k8sgpt** from the [Release](https://github.com/k8sgpt-ai/k8sgpt/releases) tab based on your system architecture.
* Extract the downloaded package to your desired location. Configure the system *path* variable with the binary location.

## Operator Installation

To install within a Kubernetes cluster, please use our `k8sgpt-operator` with installation instructions available [here](https://github.com/k8sgpt-ai/k8sgpt-operator).

_This mode of operation is ideal for continuous monitoring of your cluster and can integrate with your existing monitoring such as Prometheus and Alertmanager._

## Quick Start

* Currently the default AI provider is OpenAI, so you will need to generate an API key from [OpenAI](https://openai.com)
  * You can do this by running `k8sgpt generate` to open a browser link to generate it
* Run `k8sgpt auth add` to set it in k8sgpt.
  * You can provide the password directly using the `--password` flag.
* Run `k8sgpt filters` to manage the active filters used by the analyzer. By default, all filters are executed during analysis.
* Run `k8sgpt analyze` to run a scan.
* And use `k8sgpt analyze --explain` to get a more detailed explanation of the issues.
* You can also run `k8sgpt analyze --with-doc` (with or without the explain flag) to get the official documentation from Kubernetes.

## Analyzers

K8sGPT uses analyzers to triage and diagnose issues in your cluster. It has a set of analyzers that are built in, but you will be able to write your own analyzers.

### Built in analyzers

#### Enabled by default

- [x] podAnalyzer
- [x] pvcAnalyzer
- [x] rsAnalyzer
- [x] serviceAnalyzer
- [x] eventAnalyzer
- [x] ingressAnalyzer
- [x] statefulSetAnalyzer
- [x] deploymentAnalyzer
- [x] cronJobAnalyzer
- [x] nodeAnalyzer
- [x] mutatingWebhookAnalyzer
- [x] validatingWebhookAnalyzer

#### Optional

- [x] hpaAnalyzer
- [x] pdbAnalyzer
- [x] networkPolicyAnalyzer

## Examples

_Run a scan with the default analyzers_

```
k8sgpt generate
k8sgpt auth add
k8sgpt analyze --explain
k8sgpt analyze --explain --with-doc
```

_Filter on resource_

```
k8sgpt analyze --explain --filter=Service
```

_Filter by namespace_
```
k8sgpt analyze --explain --filter=Pod --namespace=default
```

_Output to JSON_

```
k8sgpt analyze --explain --filter=Service --output=json
```

_Anonymize during explain_

```
k8sgpt analyze --explain --filter=Service --output=json --anonymize
```

<details>
<summary> Using filters </summary>

_List filters_

```
k8sgpt filters list
```

_Add default filters_

```
k8sgpt filters add [filter(s)]
```

### Examples :

- Simple filter : `k8sgpt filters add Service`
- Multiple filters : `k8sgpt filters add Ingress,Pod`

_Remove default filters_

```
k8sgpt filters remove [filter(s)]
```

### Examples :

- Simple filter : `k8sgpt filters remove Service`
- Multiple filters : `k8sgpt filters remove Ingress,Pod`

</details>

<details>

<summary> Additional commands </summary>

_List configured backends_

```
k8sgpt auth list
```

_Update configured backends_

```
k8sgpt auth update $MY_BACKEND1,$MY_BACKEND2..
```

_Remove configured backends_

```
k8sgpt auth remove $MY_BACKEND1,$MY_BACKEND2..
```

_List integrations_

```
k8sgpt integrations list
```

_Activate integrations_

```
k8sgpt integrations activate [integration(s)]
```

_Use integration_

```
k8sgpt analyze --filter=[integration(s)]
```

_Deactivate integrations_

```
k8sgpt integrations deactivate [integration(s)]
```

_Serve mode_

```
k8sgpt serve
```

_Analysis with serve mode_

```
curl -X GET "http://localhost:8080/analyze?namespace=k8sgpt&explain=false"
```
</details>

## Key Features

<details>
<summary> LocalAI provider </summary>

To run local models, you can use OpenAI-compatible APIs such as [LocalAI](https://github.com/go-skynet/LocalAI), which uses [llama.cpp](https://github.com/ggerganov/llama.cpp) to run inference on consumer-grade hardware. Models supported by LocalAI include Vicuna, Alpaca, LLaMA, Cerebras, GPT4ALL, GPT4ALL-J, Llama2 and koala.

To run local inference, you need to download the models first. For instance, you can find `gguf` compatible models on [huggingface.co](https://huggingface.co/models?search=gguf) (for example vicuna, alpaca and koala).

### Start the API server

To start the API server, follow the instructions in [LocalAI](https://localai.io/howtos/).

### Run k8sgpt

To run k8sgpt, run `k8sgpt auth add` with the `localai` backend:

```
k8sgpt auth add --backend localai --model <model_name> --baseurl http://localhost:8080/v1 --temperature 0.7
```

Now you can analyze with the `localai` backend:

```
k8sgpt analyze --explain --backend localai
```

</details>

<details>
<summary>Setting a new default AI provider</summary>

There may be scenarios where you wish to have K8sGPT plugged into several AI providers. In this case you may wish to use one as a new default, other than OpenAI which is the project default.

_To view available providers_

```
k8sgpt auth list
Default:
> openai
Active:
> openai
> azureopenai
Unused:
> localai
> noopai
```

_To set a new default provider_

```
k8sgpt auth default -p azureopenai
Default provider set to azureopenai
```

</details>

<details>
<summary> Anonymization </summary>

With the `--anonymize` option, the data is anonymized before being sent to the AI backend. During the analysis execution, `k8sgpt` retrieves sensitive data (Kubernetes object names, labels, etc.). This data is masked when sent to the AI backend and replaced by a key that can be used to de-anonymize the data when the solution is returned to the user.

1. Error reported during analysis:
```bash
Error: HorizontalPodAutoscaler uses StatefulSet/fake-deployment as ScaleTargetRef which does not exist.
```

2. Payload sent to the AI backend:
```bash
Error: HorizontalPodAutoscaler uses StatefulSet/tGLcCRcHa1Ce5Rs as ScaleTargetRef which does not exist.
```

3. Payload returned by the AI:
```bash
The Kubernetes system is trying to scale a StatefulSet named tGLcCRcHa1Ce5Rs using the HorizontalPodAutoscaler, but it cannot find the StatefulSet. The solution is to verify that the StatefulSet name is spelled correctly and exists in the same namespace as the HorizontalPodAutoscaler.
```

4. Payload returned to the user:
```bash
The Kubernetes system is trying to scale a StatefulSet named fake-deployment using the HorizontalPodAutoscaler, but it cannot find the StatefulSet. The solution is to verify that the StatefulSet name is spelled correctly and exists in the same namespace as the HorizontalPodAutoscaler.
```
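
The four steps above amount to a reversible substitution. The sketch below illustrates that idea only and is not k8sgpt's implementation. `mask` and `unmask` are hypothetical helpers, and the name and key are the ones from the example.

```bash
#!/usr/bin/env bash
# Illustration only, not k8sgpt's implementation.
# Assumes the name and key contain no characters that are special to sed.

# mask TEXT NAME KEY: replace every NAME in TEXT with KEY
mask() { printf '%s\n' "$1" | sed "s/$2/$3/g"; }

# unmask TEXT NAME KEY: replace every KEY in TEXT with NAME
unmask() { printf '%s\n' "$1" | sed "s/$3/$2/g"; }

name='fake-deployment'
key='tGLcCRcHa1Ce5Rs'

# Outgoing payload: the real name is hidden from the AI backend.
mask "StatefulSet/$name does not exist." "$name" "$key"
# Incoming answer: the key is swapped back before the user sees it.
unmask "Check that StatefulSet $key exists." "$name" "$key"
```

Because the mapping from key to name never leaves the machine in this model, the backend only ever sees the key.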

Note: **Anonymization does not currently apply to events.**

### Further Details

*A few analysers, such as Pod, feed event messages to the AI backend. These messages are not known beforehand, so they are not masked for the **time being**.*

- The following is the list of analysers in which data is **being masked**:

  - Statefulset
  - Service
  - PodDisruptionBudget
  - Node
  - NetworkPolicy
  - Ingress
  - HPA
  - Deployment
  - Cronjob

- The following is the list of analysers in which data is **not being masked**:

  - ReplicaSet
  - PersistentVolumeClaim
  - Pod
  - **_*Events_**

\***Note**:
- k8sgpt will not mask the above analysers because they do not send any identifying information, except for the **Events** analyser.
- Masking for the **Events** analyzer is scheduled in the near future as seen in this [issue](https://github.com/k8sgpt-ai/k8sgpt/issues/560). _Further research has to be made to understand the patterns and be able to mask the sensitive parts of an event like pod name, namespace etc._

- The following is the list of fields which are not **being masked**:

  - Describe
  - ObjectStatus
  - Replicas
  - ContainerStatus
  - **_*Event Message_**
  - ReplicaStatus
  - Count (Pod)

\***Note**:
- It is quite possible the payload of the event message might have something like "super-secret-project-pod-X crashed" which we don't currently redact _(scheduled in the near future as seen in this [issue](https://github.com/k8sgpt-ai/k8sgpt/issues/560))_.

### Proceed with care

- The K8sGPT team recommends using an entirely different backend **(a local model) in critical production environments**. By using a local model, you can rest assured that everything stays within your DMZ, and nothing is leaked.
- If there is any uncertainty about sending data to a public LLM (OpenAI, Azure AI) and it poses a risk to business-critical operations, avoid using a public LLM, based on your own assessment of the risks involved.

</details>

<details>
<summary> Configuration management</summary>

`k8sgpt` stores config data in the `$XDG_CONFIG_HOME/k8sgpt/k8sgpt.yaml` file. The data is stored in plain text, including your OpenAI key.

Config file locations:

| OS | Path |
| ------- | ------------------------------------------------ |
| MacOS | ~/Library/Application Support/k8sgpt/k8sgpt.yaml |
| Linux | ~/.config/k8sgpt/k8sgpt.yaml |
| Windows | %LOCALAPPDATA%/k8sgpt/k8sgpt.yaml |
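
The table above can be expressed as a small lookup. This is a minimal sketch, assuming an explicitly set `XDG_CONFIG_HOME` takes precedence over the per-OS defaults. `k8sgpt_config_path` is a hypothetical helper, and Windows is not handled.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print where k8sgpt.yaml is expected, following the table
# above. Assumption: an explicit XDG_CONFIG_HOME wins over the OS default.
k8sgpt_config_path() {
  if [ -n "${XDG_CONFIG_HOME:-}" ]; then
    printf '%s/k8sgpt/k8sgpt.yaml\n' "$XDG_CONFIG_HOME"
  elif [ "$(uname -s)" = "Darwin" ]; then
    printf '%s/Library/Application Support/k8sgpt/k8sgpt.yaml\n' "$HOME"
  else
    printf '%s/.config/k8sgpt/k8sgpt.yaml\n' "$HOME"
  fi
}

# Usage: the file holds the API key in plain text, so it may be worth
# checking who can read it.
#   ls -l "$(k8sgpt_config_path)"
```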

</details>

<details>
<summary> Remote caching </summary>

There may be scenarios where caching remotely is preferred.
In these scenarios K8sGPT supports AWS S3 Integration.

_As a prerequisite `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY` are required as environment variables._

_Adding a remote cache_

Note: this will create the bucket if it does not exist
```
k8sgpt cache add --region <aws region> --bucket <name>
```

_Listing cache items_
```
k8sgpt cache list
```

_Removing the remote cache_

Note: this will not delete the bucket
```
k8sgpt cache remove --bucket <name>
```
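
Because the prerequisite is easy to miss, a quick check before adding the cache can save a failed run. The sketch below uses a hypothetical `aws_credentials_present` helper. It only tests that the variables are non-empty in the current shell, not that the credentials are valid or exported.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight check: succeed only if both AWS variables are
# non-empty in this shell. They must also be exported for k8sgpt to see them.
aws_credentials_present() {
  [ -n "${AWS_ACCESS_KEY_ID:-}" ] && [ -n "${AWS_SECRET_ACCESS_KEY:-}" ]
}

# Usage (region and bucket are placeholders, as in the commands above):
#   aws_credentials_present && k8sgpt cache add --region <aws region> --bucket <name>
```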

</details>

## Documentation

Find our official documentation available [here](https://docs.k8sgpt.ai).

docs/content/integrations/Kairos.md (Normal file, 39 lines)

@@ -0,0 +1,39 @@

+++
disableToc = false
title = "Kairos"
weight = 2
+++

[Kairos](https://github.com/kairos-io/kairos) - Kubernetes-focused, Cloud Native Linux meta-distribution

|

||||

|

||||

The immutable Linux meta-distribution for edge Kubernetes.

|

||||

|

||||

Github Link - https://github.com/kairos-io/kairos

|

||||

|

||||

## Intro

|

||||

|

||||

With Kairos you can build immutable, bootable Kubernetes and OS images for your edge devices as easily as writing a Dockerfile. Optional P2P mesh with distributed ledger automates node bootstrapping and coordination. Updating nodes is as easy as CI/CD: push a new image to your container registry and let secure, risk-free A/B atomic upgrades do the rest. Kairos is part of the Secure Edge-Native Architecture (SENA) to securely run workloads at the Edge ([whitepaper](https://github.com/kairos-io/kairos/files/11250843/Secure-Edge-Native-Architecture-white-paper-20240417.3.pdf)).

|

||||

|

||||

Kairos (formerly `c3os`) is an open-source project which brings Edge, cloud, and bare metal lifecycle OS management into the same design principles with a unified Cloud Native API.

|

||||

|

||||

## At-a-glance:

|

||||

|

||||

- :bowtie: Community Driven

|

||||

- :octocat: Open Source

|

||||

- :lock: Immutable Linux meta-distribution

|

||||

- :key: Secure

|

||||

- :whale: Container-based

|

||||

- :penguin: Distribution agnostic

|

||||

|

||||

## Kairos can be used to:

|

||||

|

||||

- Easily spin-up a Kubernetes cluster, with the Linux distribution of your choice :penguin:

|

||||

- Create your Immutable infrastructure, no more infrastructure drift! :lock:

|

||||

- Manage the cluster lifecycle with Kubernetes, from building to provisioning and upgrading :rocket:

|

||||

- Create a single multi-node cluster that spans multiple regions :earth_africa:

|

||||

|

||||

For comprehensive docs, tutorials, and examples see our [documentation](https://kairos.io/docs/getting-started/).

|

||||

|

||||

60

docs/content/integrations/LLMStack.md

Normal file


|

|

@ -0,0 +1,60 @@

|

|||

|

||||

+++

|

||||

disableToc = false

|

||||

title = "LLMStack"

|

||||

weight = 2

|

||||

+++

|

||||

|

||||

|

||||

|

||||

[LLMStack](https://github.com/trypromptly/LLMStack) - LLMStack is a no-code platform for building generative AI applications, chatbots, agents and connecting them to your data and business processes.

|

||||

|

||||

GitHub Link - https://github.com/trypromptly/LLMStack

|

||||

|

||||

## Overview

|

||||

|

||||

Build tailor-made generative AI applications, chatbots and agents that cater to your unique needs by chaining multiple LLMs. Seamlessly integrate your own data and GPT-powered models without any coding experience using LLMStack's no-code builder. Trigger your AI chains from Slack or Discord. Deploy to the cloud or on-premise.

|

||||

|

||||

|

||||

|

||||

## Getting Started

|

||||

|

||||

An LLMStack deployment comes with a default admin account whose credentials are `admin` and `promptly`. _Be sure to change the password from the admin panel after logging in_.

|

||||

|

||||

## Features

|

||||

|

||||

**🔗 Chain multiple models**: LLMStack allows you to chain multiple LLMs together to build complex generative AI applications.

|

||||

|

||||

**📊 Use generative AI on your Data**: Import your data into your accounts and use it in AI chains. LLMStack allows importing various types of data (_CSV, TXT, PDF, DOCX, PPTX, etc._) from a variety of sources (_gdrive, notion, websites, direct uploads, etc._). The platform takes care of preprocessing and vectorizing your data and stores it in the vector database that is provided out of the box.

|

||||

|

||||

**🛠️ No-code builder**: LLMStack comes with a no-code builder that allows you to build AI chains without any coding experience. You can chain multiple LLMs together and connect them to your data and business processes.

|

||||

|

||||

**☁️ Deploy to the cloud or on-premise**: LLMStack can be deployed to the cloud or on-premise. You can deploy it to your own infrastructure or use our cloud offering at [Promptly](https://trypromptly.com).

|

||||

|

||||

**🚀 API access**: Apps or chatbots built with LLMStack can be accessed via HTTP API. You can also trigger your AI chains from **_Slack_** or **_Discord_**.

|

||||

|

||||

**🏢 Multi-tenant**: LLMStack is multi-tenant. You can create multiple organizations and add users to them. Users can only access the data and AI chains that belong to their organization.

|

||||

|

||||

## What can you build with LLMStack?

|

||||

|

||||

Using LLMStack you can build a variety of generative AI applications, chatbots and agents. Here are some examples:

|

||||

|

||||

**📝 Text generation**: You can build apps that generate product descriptions, blog posts, news articles, tweets, emails, chat messages, etc., by using text generation models and optionally connecting your data. Check out this [marketing content generator](https://trypromptly.com/app/50ee8bae-712e-4b95-9254-74d7bcf3f0cb) for an example.

|

||||

|

||||

**🤖 Chatbots**: You can build chatbots trained on your data and powered by ChatGPT, like [Promptly Help](https://trypromptly.com/app/f4d7cb50-1805-4add-80c5-e30334bce53c), which is embedded on the Promptly website.

|

||||

|

||||

**🎨 Multimedia generation**: Build complex applications that can generate text, images, videos, audio, etc. from a prompt. This [story generator](https://trypromptly.com/app/9d6da897-67cf-4887-94ec-afd4b9362655) is an example

|

||||

|

||||

**🗣️ Conversational AI**: Build conversational AI systems that can have a conversation with a user. Check out this [Harry Potter character chatbot](https://trypromptly.com/app/bdeb9850-b32e-44cf-b2a8-e5d54dc5fba4)

|

||||

|

||||

**🔍 Search augmentation**: Build search augmentation systems that can augment search results with additional information using APIs. Sharebird uses LLMStack to augment search results with AI-generated answers from their content, similar to Bing's chatbot.

|

||||

|

||||

**💬 Discord and Slack bots**: Apps built on LLMStack can be triggered from Slack or Discord. You can easily connect your AI chains to Slack or Discord from LLMStack's no-code app editor. Check out our [Discord server](https://discord.gg/3JsEzSXspJ) to interact with one such bot.

|

||||

|

||||

## Administration

|

||||

|

||||

Log in to [http://localhost:3000/admin](http://localhost:3000/admin) using the admin account. You can add users and assign them to organizations in the admin panel.

|

||||

|

||||

## Documentation

|

||||

|

||||

Check out our documentation at [llmstack.ai/docs](https://llmstack.ai/docs/) to learn more about LLMStack.

|

||||

74

docs/content/integrations/LinGoose.md

Normal file


|

|

@ -0,0 +1,74 @@

|

|||

|

||||

+++

|

||||

disableToc = false

|

||||

title = "LinGoose"

|

||||

weight = 2

|

||||

+++

|

||||

|

||||

**LinGoose** (_Lingo + Go + Goose_ 🪿) aims to be a complete Go framework for creating LLM apps. 🤖 ⚙️

|

||||

|

||||

|

||||

|

||||

GitHub Link - https://github.com/henomis/lingoose

|

||||

|

||||

## Overview

|

||||

|

||||

**LinGoose** is a powerful Go framework for developing Large Language Model (LLM) based applications using pipelines. It is designed to be a complete solution and provides multiple components, including Prompts, Templates, Chat, Output Decoders, LLM, Pipelines, and Memory. With **LinGoose**, you can interact with LLM AI through prompts and generate complex templates. Additionally, it includes a chat feature, allowing you to create chatbots. The Output Decoders component enables you to extract specific information from the output of the LLM, while the LLM interface allows you to send prompts to various AI, such as the ones provided by OpenAI. You can chain multiple LLM steps together using Pipelines and store the output of each step in Memory for later retrieval. **LinGoose** also includes a Document component, which is used to store text, and a Loader component, which is used to load Documents from various sources. Finally, it includes TextSplitters, which are used to split text or Documents into multiple parts, Embedders, which are used to embed text or Documents into embeddings, and Indexes, which are used to store embeddings and documents and to perform searches.
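
To make the last point concrete, here is a minimal sketch of what "storing embeddings and performing searches" can look like: an in-memory list of vectors ranked by cosine similarity. It is an illustration only, not LinGoose's implementation; the `entry`, `cosine`, and `topK` names are hypothetical, and the toy three-dimensional vectors stand in for real embeddings.

```go
package main

import (
	"fmt"
	"math"
	"sort"
)

// entry pairs a stored text with its embedding vector (hypothetical type).
type entry struct {
	Text      string
	Embedding []float64
}

// cosine returns the cosine similarity of two equal-length vectors,
// or 0 if either vector has zero norm.
func cosine(a, b []float64) float64 {
	var dot, na, nb float64
	for i := range a {
		dot += a[i] * b[i]
		na += a[i] * a[i]
		nb += b[i] * b[i]
	}
	if na == 0 || nb == 0 {
		return 0
	}
	return dot / (math.Sqrt(na) * math.Sqrt(nb))
}

// topK returns the k entries most similar to query, best match first.
func topK(index []entry, query []float64, k int) []entry {
	ranked := make([]entry, len(index))
	copy(ranked, index) // keep the caller's slice order untouched
	sort.SliceStable(ranked, func(i, j int) bool {
		return cosine(ranked[i].Embedding, query) > cosine(ranked[j].Embedding, query)
	})
	if k < 0 {
		k = 0
	}
	if k > len(ranked) {
		k = len(ranked)
	}
	return ranked[:k]
}

func main() {
	// Toy vectors; a real index would hold embeddings produced by an Embedder.
	index := []entry{
		{"NATO charter", []float64{0.9, 0.1, 0.0}},
		{"cooking recipes", []float64{0.0, 0.2, 0.9}},
		{"treaty history", []float64{0.7, 0.6, 0.1}},
	}
	for _, e := range topK(index, []float64{1, 0, 0}, 2) {
		fmt.Println(e.Text)
	}
}
```

A linear scan like this is only reasonable for small collections; larger ones are where external vector stores come in.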

|

||||

|

||||

## Components

|

||||

|

||||

**LinGoose** is composed of multiple components, each one with its own purpose.

|

||||

|

||||

| Component | Package | Description |

|

||||

| ----------------- | ----------------------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------ |

|

||||

| **Prompt** | [prompt](prompt/) | Prompts are the way to interact with LLM AI. They can be simple text, or more complex templates. Supports **Prompt Templates** and **[Whisper](https://openai.com) prompt** |

|

||||

| **Chat Prompt** | [chat](chat/) | Chat is the way to interact with the chat LLM AI. It can be a simple text prompt, or a more complex chatbot. |

|

||||

| **Decoders** | [decoder](decoder/) | Output decoders are used to decode the output of the LLM. They can be used to extract specific information from the output. Supports **JSONDecoder** and **RegExDecoder** |

|

||||

| **LLMs** | [llm](llm/) | LLM is an interface to various AI such as the ones provided by OpenAI. It is responsible for sending the prompt to the AI and retrieving the output. Supports **[LocalAI](https://localai.io/howtos/)**, **[HuggingFace](https://huggingface.co)** and **[Llama.cpp](https://github.com/ggerganov/llama.cpp)**. |

|

||||

| **Pipelines** | [pipeline](pipeline/) | Pipelines are used to chain multiple LLM steps together. |

|

||||

| **Memory** | [memory](memory/) | Memory is used to store the output of each step. It can be used to retrieve the output of a previous step. Supports memory in **RAM** |

|

||||

| **Document** | [document](document/) | Document is used to store a text |

|

||||

| **Loaders** | [loader](loader/) | Loaders are used to load Documents from various sources. Supports **TextLoader**, **DirectoryLoader**, **PDFToTextLoader** and **PubMedLoader**. |

|

||||

| **TextSplitters** | [textsplitter](textsplitter/) | TextSplitters are used to split text or Documents into multiple parts. Supports **RecursiveTextSplitter**. |

|

||||

| **Embedders** | [embedder](embedder/) | Embedders are used to embed text or Documents into embeddings. Supports **[OpenAI](https://openai.com)** |

|

||||

| **Indexes** | [index](index/) | Indexes are used to store embeddings and documents and to perform searches. Supports **SimpleVectorIndex**, **[Pinecone](https://pinecone.io)** and **[Qdrant](https://qdrant.tech)** |

|

||||

|

||||

## Usage

|

||||

|

||||

Please refer to the documentation at [lingoose.io](https://lingoose.io/docs/) to understand how to use LinGoose. If you prefer, the 👉 [examples directory](examples/) contains many examples 🚀.

|

||||

However, here is a **powerful** example of what **LinGoose** is capable of:

|

||||

|

||||

_Talk is cheap. Show me the [code](examples/)._ - Linus Torvalds

|

||||

|

||||

```go

|

||||

package main

|

||||

|

||||

import (

|

||||

"context"

|

||||

|

||||

openaiembedder "github.com/henomis/lingoose/embedder/openai"

|

||||

"github.com/henomis/lingoose/index/option"

|

||||

simplevectorindex "github.com/henomis/lingoose/index/simpleVectorIndex"

|

||||

"github.com/henomis/lingoose/llm/openai"

|

||||

"github.com/henomis/lingoose/loader"

|

||||

qapipeline "github.com/henomis/lingoose/pipeline/qa"

|

||||

"github.com/henomis/lingoose/textsplitter"

|

||||

)

|

||||

|

||||

func main() {

|

||||

docs, _ := loader.NewPDFToTextLoader("./kb").WithPDFToTextPath("/opt/homebrew/bin/pdftotext").WithTextSplitter(textsplitter.NewRecursiveCharacterTextSplitter(2000, 200)).Load(context.Background())

|

||||

index := simplevectorindex.New("db", ".", openaiembedder.New(openaiembedder.AdaEmbeddingV2))

|

||||

index.LoadFromDocuments(context.Background(), docs)

|

||||

qapipeline.New(openai.NewChat().WithVerbose(true)).WithIndex(index).Query(context.Background(), "What is the NATO purpose?", option.WithTopK(1))

|

||||

}

|

||||

```

|

||||

|

||||

This is the _famous_ 4-line **lingoose** knowledge base chatbot. 🤖
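
The example above passes `2000` and `200` to the text splitter, presumably a chunk size and an overlap between consecutive chunks. As a rough sketch of that idea, the snippet below cuts a string into fixed-size windows that share a few characters with their predecessor. It is a simplification under that assumption, not LinGoose's recursive splitter, and `splitWithOverlap` is a hypothetical name.

```go
package main

import "fmt"

// splitWithOverlap cuts text into chunks of at most size runes, where each
// chunk after the first starts with the last overlap runes of the previous one.
func splitWithOverlap(text string, size, overlap int) []string {
	if size <= 0 || overlap < 0 || overlap >= size {
		return nil // invalid parameters: the window would never advance
	}
	runes := []rune(text) // work on runes so multi-byte characters stay intact
	var chunks []string
	for start := 0; start < len(runes); start += size - overlap {
		end := start + size
		if end > len(runes) {
			end = len(runes)
		}
		chunks = append(chunks, string(runes[start:end]))
		if end == len(runes) {
			break // the last window reached the end of the text
		}
	}
	return chunks
}

func main() {
	for i, c := range splitWithOverlap("abcdefghij", 4, 1) {
		fmt.Printf("%d: %q\n", i, c)
	}
}
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, at the cost of embedding some text twice.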

|

||||

|

||||

## Installation

|

||||

|

||||

Be sure to have a working Go environment, then run the following command:

|

||||

|

||||

```shell

|

||||

go get github.com/henomis/lingoose

|

||||

```

|

||||

174

docs/content/integrations/LocalAGI.md

Normal file


|

|

@ -0,0 +1,174 @@

|

|||

|

||||

+++

|

||||

disableToc = false

|

||||

title = "LocalAGI"

|

||||

weight = 2

|

||||

+++

|

||||

|

||||

LocalAGI is a small 🤖 virtual assistant that you can run locally, made by the [LocalAI](https://github.com/go-skynet/LocalAI) author and powered by it.

|

||||

|

||||

|

||||

|

||||

[AutoGPT](https://github.com/Significant-Gravitas/Auto-GPT), [babyAGI](https://github.com/yoheinakajima/babyagi), ... and now LocalAGI!

|

||||

|

||||

GitHub Link - https://github.com/mudler/LocalAGI

|

||||

|

||||

## Info

|

||||

|

||||

The goal is:

|

||||

- Keep it simple, hackable and easy to understand

|

||||

- No API keys or cloud services needed: 100% local. Tailored for local use, but still compatible with OpenAI.

|

||||

- Smart-agent/virtual assistant that can do tasks

|

||||

- Small set of dependencies

|

||||

- Run with Docker/Podman/Containers

|

||||

- Rather than trying to do everything, provide a good starting point for other projects

|

||||

|

||||

Note: Be warned! It was hacked in a weekend, and it's just an experiment to see what can be done with local LLMs.

|

||||

|

||||

|

||||

|

||||

## 🚀 Features

|

||||

|

||||

- 🧠 LLM for intent detection

|

||||

- 🧠 Uses functions for actions

|

||||

- 📝 Write to long-term memory

|

||||

- 📖 Read from long-term memory

|

||||

- 🌐 Internet access for search

|

||||

- :card_file_box: Write files

|

||||

- 🔌 Plan steps to achieve a goal

|

||||

- 🤖 Avatar creation with Stable Diffusion

|

||||

- 🗨️ Conversational

|

||||

- 🗣️ Voice synthesis with TTS

|

||||

|

||||

## :book: Quick start

|

||||

|

||||

No frills, just run docker-compose and start chatting with your virtual assistant:

|

||||

|

||||

```bash

|

||||

# Modify the configuration

|

||||

# nano .env

|

||||

docker-compose run -i --rm localagi

|

||||

```

|

||||

|

||||

## How to use it

|

||||

|

||||

By default localagi starts in interactive mode

|

||||

|

||||

### Examples

|

||||

|

||||

Road trip planner, limiting internet search to 3 results:

|

||||

|

||||

```bash

|

||||

docker-compose run -i --rm localagi \

|

||||

--skip-avatar \

|

||||

--subtask-context \

|

||||

--postprocess \

|

||||

--search-results 3 \

|

||||

--prompt "prepare a plan for my roadtrip to san francisco"

|

||||

```

|

||||

|

||||

Limit results of planning to 3 steps:

|

||||

|

||||

```bash

|

||||

docker-compose run -i --rm localagi \

|

||||

--skip-avatar \

|

||||

--subtask-context \

|

||||

--postprocess \

|

||||

--search-results 1 \

|

||||

--prompt "do a plan for my roadtrip to san francisco" \

|

||||

--plan-message "The assistant replies with a plan of 3 steps to answer the request with a list of subtasks with logical steps. The reasoning includes a self-contained, detailed and descriptive instruction to fulfill the task."

|

||||

```

|

||||

|

||||

### Advanced

|

||||

|

||||

localagi has several options in the CLI to tweak the experience:

|

||||

|

||||

- `--system-prompt` is the system prompt to use. If not specified, it will use none.

|

||||

- `--prompt` is the prompt to use for batch mode. If not specified, it will default to interactive mode.

|

||||

- `--interactive` enables interactive mode. When used with `--prompt`, it drops you into an interactive session after the first prompt is evaluated.

|

||||

- `--skip-avatar` will skip avatar creation. Useful if you want to run it in a headless environment.

|

||||

- `--re-evaluate` will re-evaluate whether another action is needed or the user request has been completed.

|

||||

- `--postprocess` will postprocess the reasoning for analysis.

|

||||

- `--subtask-context` will include context in subtasks.

|

||||

- `--search-results` is the number of search results to use.

|

||||

- `--plan-message` is the message to use during planning. You can override it, for example, to limit the plan to a fixed number of steps.

|

||||

- `--tts-api-base` is the TTS API base. Defaults to `http://api:8080`.

|

||||

- `--localai-api-base` is the LocalAI API base. Defaults to `http://api:8080`.

|

||||

- `--images-api-base` is the Images API base. Defaults to `http://api:8080`.

|

||||

- `--embeddings-api-base` is the Embeddings API base. Defaults to `http://api:8080`.

|

||||

- `--functions-model` is the functions model to use. Defaults to `functions`.

|

||||

- `--embeddings-model` is the embeddings model to use. Defaults to `all-MiniLM-L6-v2`.

|

||||

- `--llm-model` is the LLM model to use. Defaults to `gpt-4`.

|

||||

- `--tts-model` is the TTS model to use. Defaults to `en-us-kathleen-low.onnx`.

|

||||

- `--stablediffusion-model` is the Stable Diffusion model to use. Defaults to `stablediffusion`.

|

||||

- `--stablediffusion-prompt` is the Stable Diffusion prompt to use. Defaults to `DEFAULT_PROMPT`.

|

||||

- `--force-action` will force a specific action.

|

||||

- `--debug` will enable debug mode.

|

||||

|

||||

### Customize

|

||||

|

||||

To use a different model, you can see the examples in the `config` folder.

|

||||

To select a model, modify the `.env` file and change the `PRELOAD_MODELS_CONFIG` variable to use a different configuration file.

|

||||

|

||||

### Caveats

|

||||

|

||||

The "goodness" of a model has a big impact on how LocalAGI works. Currently, `13b` models are powerful enough to actually perform multi-step tasks or do more actions. However, they are quite slow when running on CPU (no big surprise here).

|

||||

|

||||

The context size is a limitation: the `config` folder includes examples for running with a superhot 8k context size, but the quality is not good enough to perform complex tasks.

|

||||

|

||||

## What is LocalAGI?

|

||||

|

||||

It is a dead simple experiment to show how to tie together the various LocalAI functionalities to create a virtual assistant that can do tasks. It is simple on purpose, trying to be minimalistic and easy to understand and customize for everyone.

|

||||

|

||||

It differs from babyAGI or AutoGPT in that it uses [LocalAI functions](https://localai.io/features/openai-functions/): it is a from-scratch attempt built specifically to run locally with [LocalAI](https://localai.io) (no API keys needed!) instead of expensive cloud services. It sets itself apart from other projects by striving to be small and easy to fork.

|

||||

|

||||

### How does it work?

|

||||

|

||||

`LocalAGI` does just the minimum around LocalAI functions to create a virtual assistant that can do generic tasks. It works by an endless loop of `intent detection`, `function invocation`, `self-evaluation` and `reply generation` (if it decides to reply! :)). The agent is capable of planning complex tasks by invoking multiple functions, and of remembering things from the conversation.

|

||||

|

||||

In a nutshell, it goes like this:

|

||||

|

||||

- Decide based on the conversation history if it needs to take an action by using functions. It uses the LLM to detect the intent from the conversation.

|

||||

- If it needs to take an action (e.g. "remember something from the conversation") or generate complex tasks (executing a chain of functions to achieve a goal), it invokes the functions.

|

||||

- It re-evaluates whether it needs to take any other action.

|

||||

- It returns the result to the LLM to generate a reply for the user.
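
The steps above can be sketched as a small loop. This is a toy model, not LocalAGI's code (which lives in `main.py`): `detectIntent` is a hypothetical stand-in for the LLM call and uses a keyword rule, the only function is `remember`, and the reply is a fixed string.

```go
package main

import (
	"fmt"
	"strings"
)

// action is what the stubbed intent detector decides to do next.
type action struct {
	Name string // "" means no further action is needed
	Arg  string
}

// detectIntent stands in for the LLM call that picks a function.
// Hypothetical rule: act only when the latest entry is a user message
// containing the word "remember".
func detectIntent(history []string) action {
	if len(history) == 0 {
		return action{}
	}
	last := history[len(history)-1]
	if strings.HasPrefix(last, "user: ") && strings.Contains(last, "remember") {
		return action{Name: "remember", Arg: strings.TrimPrefix(last, "user: ")}
	}
	return action{}
}

// runTurn repeats intent detection and function invocation until no action
// remains (or maxSteps is hit), then produces a stubbed reply.
func runTurn(history []string, memory *[]string, maxSteps int) string {
	taken := 0
	for step := 0; step < maxSteps; step++ {
		act := detectIntent(history) // intent detection, also used to re-evaluate
		if act.Name == "" {
			break
		}
		switch act.Name { // function invocation
		case "remember":
			*memory = append(*memory, act.Arg)
			history = append(history, "function: stored "+act.Arg)
		default:
			history = append(history, "function: unknown action "+act.Name)
		}
		taken++
	}
	// Reply generation: a real agent would send the history back to the LLM.
	return fmt.Sprintf("ok (%d action(s) taken)", taken)
}

func main() {
	var memory []string
	reply := runTurn([]string{"user: remember my cat is called Tofu"}, &memory, 5)
	fmt.Println(reply)
	fmt.Println(memory)
}
```

The `maxSteps` bound is an addition for this sketch so that a misbehaving detector cannot loop forever.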

|

||||

|

||||

Under the hood, LocalAI converts functions to llama.cpp BNF grammars. While OpenAI fine-tuned a model to reply to functions, LocalAI constrains the LLM to follow grammars. This is a much more efficient way to do it, and it is also more flexible as you can define your own functions and grammars. To learn more about this, check out the [LocalAI documentation](https://localai.io/docs/llm) and my tweet that explains how it works under the hood: https://twitter.com/mudler_it/status/1675524071457533953.

|

||||

|

||||

### Agent functions

|

||||

|

||||

The intention of this project is to keep the agent minimal, so that it can be built upon or forked. The agent can perform the following functions:

|

||||

- remember something from the conversation

|

||||

- recall something from the conversation

|

||||

- search something from the internet

|

||||

- plan a complex task by invoking multiple functions

|

||||

- write files to disk

|

||||

|

||||

## Roadmap

|

||||

|

||||

- [x] 100% Local, with Local AI. NO API KEYS NEEDED!

|

||||

- [x] Create a simple virtual assistant

|

||||

- [x] Give the virtual assistant functions such as storing long-term memories and autonomously searching them when needed

|

||||

- [x] Create the assistant avatar with Stable Diffusion

|

||||

- [x] Give it a voice

|

||||

- [ ] Use weaviate instead of Chroma

|

||||

- [ ] Get voice input (push to talk or wakeword)

|

||||

- [ ] Make a REST API (OpenAI compliant?) so it can be plugged into e.g. a third party service

|

||||

- [x] Take a system prompt so it can act with a "character" (e.g. "answer in rick and morty style")

|

||||

|

||||

## Development

|

||||

|

||||

Run docker-compose with main.py checked-out:

|

||||

|

||||

```bash

|

||||

docker-compose run -v ./main.py:/app/main.py -i --rm localagi

|

||||

```

|

||||

|

||||

## Notes

|

||||

|

||||

- a 13b model is enough for doing contextualized research and search/retrieve memory

|

||||

- a 30b model is enough to generate a roadmap trip plan ( so cool! )

|

||||

- With superhot models it loses its magic, but they may be suitable for search

|

||||

- Context size is your enemy. `--postprocess` sometimes helps, but not always

|

||||

- It can be silly!

|

||||

- It is slow on CPU. Don't expect `7b` models to perform well; `13b` models perform better but are quite slow on CPU.

|

||||

84

docs/content/integrations/Mattermost-OpenOps.md

Normal file


|

|

@ -0,0 +1,84 @@

|

|||

|

||||

+++

|

||||

disableToc = false

|

||||

title = "Mattermost-OpenOps"

|

||||

weight = 2

|

||||

+++

|

||||

|

||||

OpenOps is an open source platform for applying generative AI to workflows in secure environments.

|

||||

|

||||

|

||||

|

||||

GitHub Link - https://github.com/mattermost/openops

|

||||

|

||||

* Enables AI exploration with full data control in a multi-user pilot.

|

||||

* Supports broad ecosystem of AI models from OpenAI and Microsoft to open source LLMs from Hugging Face.

|

||||

* Speeds development of custom security, compliance and data custody policy from early evaluation to future scale.

|

||||

|

||||

Unlike closed source, vendor-controlled environments where data controls cannot be audited, OpenOps provides a transparent, open source, customer-controlled platform for developing, securing and auditing AI-accelerated workflows.

|

||||

|

||||

### Why OpenOps?

|

||||

|

||||

Everyone is in a race to deploy generative AI solutions, but needs to do so in a responsible and safe way. OpenOps lets you run powerful models in a safe sandbox to establish the right safety protocols before rolling out to users. Here's an example of an evaluation, implementation, and iterative rollout process:

|

||||

|

||||

- **Phase 1:** Set up the OpenOps collaboration sandbox, a self-hosted service providing multi-user chat and integration with GenAI. *(this repository)*

|

||||

|

||||

- **Phase 2:** Evaluate different GenAI providers, whether from public SaaS services like OpenAI or local open source models, based on your security and privacy requirements.

|

||||

|

||||

- **Phase 3:** Invite select early adopters (especially colleagues focusing on trust and safety) to explore and evaluate the GenAI based on their workflows. Observe behavior, record user feedback, and identify issues. Iterate on workflows and usage policies together in the sandbox. Consider issues such as data leakage, legal/copyright, privacy, response correctness and appropriateness as you apply AI at scale.

|

||||

|

||||

- **Phase 4:** Set and implement policies as availability is incrementally rolled out to your wider organization.

|

||||

|

||||

### What does OpenOps include?

|

||||

|

||||

Deploying the OpenOps sandbox includes the following components:

|

||||

- 🏰 **Mattermost Server** - Open source, self-hosted alternative to Discord and Slack for strict security environments with playbooks/workflow automation, tools integration, real time 1-1 and group messaging, audio calling and screenshare.

|

||||

- 📙 **PostgreSQL** - Database for storing private data from multi-user, chat collaboration discussions and audit history.

|

||||

- 🤖 [**Mattermost AI plugin**](https://github.com/mattermost/mattermost-plugin-ai) - Extension of Mattermost platform for AI bot and generative AI integration.

|

||||

- 🦙 **Open Source, Self-Hosted LLM models** - Models for evaluation and use case development from Hugging Face and other sources, including GPT4All (runs on a laptop in 4.2 GB) and Falcon LLM (example of leading scaled self-hosted models). Uses [LocalAI](https://github.com/go-skynet/LocalAI).

|

||||

- 🔌🧠 ***(Configurable)* Closed Source, Vendor-Hosted AI models** - SaaS-based GenAI models from Azure AI, OpenAI, & Anthropic.

|

||||

- 🔌📱 ***(Configurable)* Mattermost Mobile and Desktop Apps** - End-user apps for future production deployment.

|

||||

|

||||

## Install

|

||||

|

||||

### Local

|

||||

|

||||

***Rather watch a video?** 📽️ Check out our YouTube tutorial video for getting started with OpenOps: https://www.youtube.com/watch?v=20KSKBzZmik*

|

||||

|

||||

***Rather read a blog post?** 📝 Check out our Mattermost blog post for getting started with OpenOps: https://mattermost.com/blog/open-source-ai-framework/*

|

||||

|

||||

1. Clone the repository: `git clone https://github.com/mattermost/openops && cd openops`

|

||||

2. Start docker services and configure plugin

|

||||

- **If using OpenAI:**

|

||||

- Run `env backend=openai ./init.sh`

|

||||

- Run `./configure_openai.sh sk-<your openai key>` to add your API credentials *or* use the Mattermost system console to configure the plugin

|

||||

- **If using LocalAI:**

|

||||

- Run `env backend=localai ./init.sh`

|

||||

- Run `env backend=localai ./download_model.sh` to download one *or* supply your own gguf formatted model in the `models` directory.

|

||||

3. Access Mattermost and log in with the credentials provided in the terminal.

|

||||

|

||||

When you log in, you will start out in a direct message with your AI Assistant bot. Now you can start exploring AI [usages](#usage).

|

||||

|

||||

### Gitpod

|

||||

[Open in Gitpod](https://gitpod.io/#backend=openai/https://github.com/mattermost/openops)

|

||||

|

||||

1. Click the above badge and start your Gitpod workspace

|

||||

2. You will see the VS Code interface and the workspace will configure itself automatically. Wait for the services to start and for your `root` login for Mattermost to be generated in the terminal

|

||||

3. Run `./configure_openai.sh sk-<your openai key>` to add your API credentials *or* use the Mattermost system console to configure the plugin

|

||||

4. Access Mattermost and log in with the credentials supplied in the terminal.

|

||||

|

||||

When you log in, you will start out in a direct message with your AI Assistant bot. Now you can start exploring AI [usages](#usage).

|

||||

|

||||

## Usage

|

||||

|

||||

There are many ways to integrate generative AI into confidential, self-hosted workplace discussions. To help you get started, here are some examples provided in OpenOps:

|

||||

|

||||

| Title | Image | Description |

|

||||

| ---------------------------------------------- | -------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- | ------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

|

||||

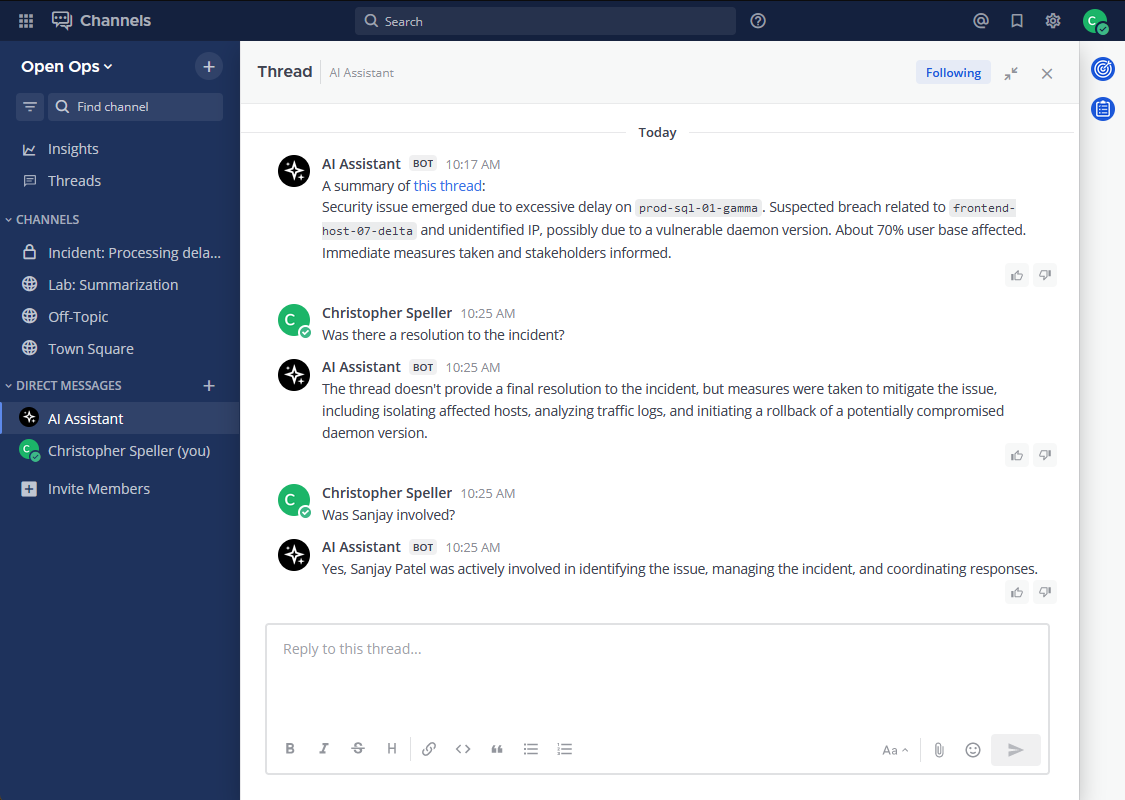

| **Streaming Conversation** |  | The OpenOps platform reproduces streamed replies from popular GenAI chatbots creating a sense of responsiveness and conversational engagement, while masking actual wait times. |

|

||||

| **Thread Summarization** |  | Use the "Summarize Thread" menu option or the `/summarize` command to get a summary of the thread in a Direct Message from an AI bot. AI-generated summaries can be created from private, chat-based discussions to speed information flows and decision-making while reducing the time and cost required for organizations to stay up-to-date. |

|

||||