mirror of https://github.com/mudler/LocalAI.git

synced 2025-05-28 06:25:00 +00:00

Update readme and examples

parent e55492475d

commit 3a90ea44a5

4 changed files with 90 additions and 18 deletions

@@ -2,15 +2,76 @@

Here is a list of projects that can easily be integrated with the LocalAI backend.

## Projects

### Projects

- [chatbot-ui](https://github.com/go-skynet/LocalAI/tree/master/examples/chatbot-ui/) (by [@mkellerman](https://github.com/mkellerman))
- [discord-bot](https://github.com/go-skynet/LocalAI/tree/master/examples/discord-bot/) (by [@mudler](https://github.com/mudler))
- [langchain](https://github.com/go-skynet/LocalAI/tree/master/examples/langchain/) (by [@dave-gray101](https://github.com/dave-gray101))
- [langchain-python](https://github.com/go-skynet/LocalAI/tree/master/examples/langchain-python/) (by [@mudler](https://github.com/mudler))
- [localai-webui](https://github.com/go-skynet/LocalAI/tree/master/examples/localai-webui/) (by [@dhruvgera](https://github.com/dhruvgera))
- [rwkv](https://github.com/go-skynet/LocalAI/tree/master/examples/rwkv/) (by [@mudler](https://github.com/mudler))
- [slack-bot](https://github.com/go-skynet/LocalAI/tree/master/examples/slack-bot/) (by [@mudler](https://github.com/mudler))

### Chatbot-UI

_by [@mkellerman](https://github.com/mkellerman)_

This integration shows how to use LocalAI with [mckaywrigley/chatbot-ui](https://github.com/mckaywrigley/chatbot-ui).

[Check it out here](https://github.com/go-skynet/LocalAI/tree/master/examples/chatbot-ui/)

### Discord bot

_by [@mudler](https://github.com/mudler)_

Run a Discord bot that lets you talk directly with a model.

[Check it out here](https://github.com/go-skynet/LocalAI/tree/master/examples/discord-bot/), or for a live demo, talk with our bot in #random-bot on our Discord server.

### Langchain

_by [@dave-gray101](https://github.com/dave-gray101)_

A ready-to-use example showing, end to end, how to integrate LocalAI with LangChain.

[Check it out here](https://github.com/go-skynet/LocalAI/tree/master/examples/langchain/)

### Langchain Python

_by [@mudler](https://github.com/mudler)_

A ready-to-use example showing, end to end, how to integrate LocalAI with LangChain.

[Check it out here](https://github.com/go-skynet/LocalAI/tree/master/examples/langchain-python/)

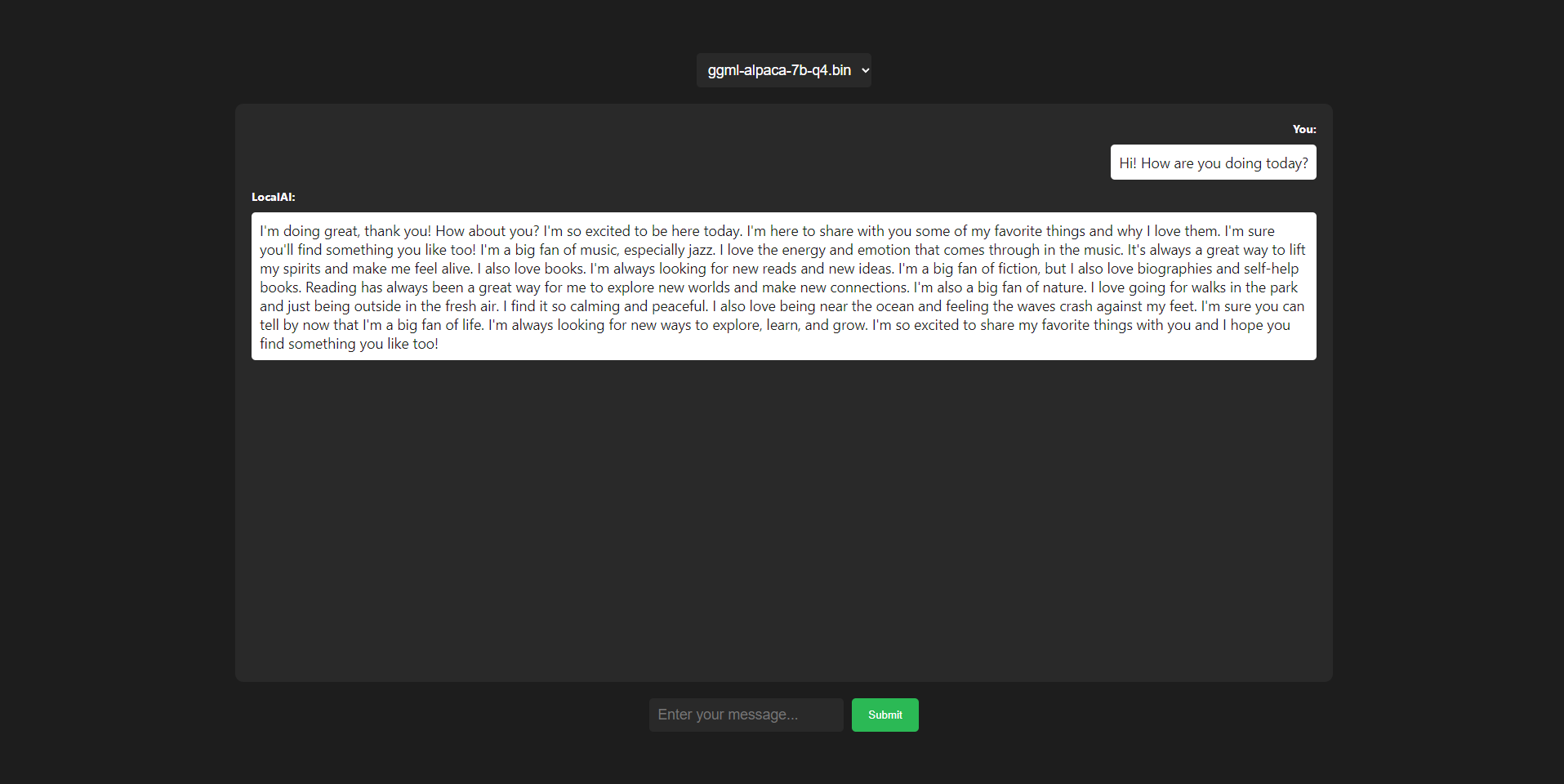

### LocalAI WebUI

_by [@dhruvgera](https://github.com/dhruvgera)_

A light, community-maintained web interface for LocalAI

[Check it out here](https://github.com/go-skynet/LocalAI/tree/master/examples/localai-webui/)

### How to run RWKV models

_by [@mudler](https://github.com/mudler)_

A full example of how to run RWKV models with LocalAI.

[Check it out here](https://github.com/go-skynet/LocalAI/tree/master/examples/rwkv/)

### Slack bot

_by [@mudler](https://github.com/mudler)_

Run a Slack bot that lets you talk directly with a model.

[Check it out here](https://github.com/go-skynet/LocalAI/tree/master/examples/slack-bot/)

### Question answering on documents

_by [@mudler](https://github.com/mudler)_

Shows how to integrate with [Llama-Index](https://gpt-index.readthedocs.io/en/stable/getting_started/installation.html) to enable question answering on a set of documents.

[Check it out here](https://github.com/go-skynet/LocalAI/tree/master/examples/query_data/)

## Want to contribute?

@@ -7,9 +7,10 @@ from llama_index import LLMPredictor, PromptHelper, ServiceContext

from langchain.llms.openai import OpenAI
from llama_index import StorageContext, load_index_from_storage

base_path = os.environ.get('OPENAI_API_BASE', 'http://localhost:8080/v1')

# This example uses text-davinci-003 by default; feel free to change if desired
llm_predictor = LLMPredictor(llm=OpenAI(temperature=0, model_name="gpt-3.5-turbo",openai_api_base="http://localhost:8080/v1"))
llm_predictor = LLMPredictor(llm=OpenAI(temperature=0, model_name="gpt-3.5-turbo",openai_api_base=base_path))

# Configure prompt parameters and initialise helper
max_input_size = 1024

@@ -7,8 +7,10 @@ from llama_index import GPTVectorStoreIndex, SimpleDirectoryReader, LLMPredictor

from langchain.llms.openai import OpenAI
from llama_index import StorageContext, load_index_from_storage

base_path = os.environ.get('OPENAI_API_BASE', 'http://localhost:8080/v1')

# This example uses text-davinci-003 by default; feel free to change if desired
llm_predictor = LLMPredictor(llm=OpenAI(temperature=0, model_name="gpt-3.5-turbo",openai_api_base="http://localhost:8080/v1"))
llm_predictor = LLMPredictor(llm=OpenAI(temperature=0, model_name="gpt-3.5-turbo", openai_api_base=base_path))

# Configure prompt parameters and initialise helper
max_input_size = 256
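
Both Python hunks above replace a hard-coded `http://localhost:8080/v1` with a value read from the `OPENAI_API_BASE` environment variable. The following is a minimal, standalone sketch of that lookup, not code from the commit: the helper name `resolve_api_base` and the constant `DEFAULT_API_BASE` are hypothetical, and the optional `env` argument exists only so the behaviour can be checked without touching the real environment.

```python
import os

# Default taken from the hunks above; the constant name itself is hypothetical.
DEFAULT_API_BASE = "http://localhost:8080/v1"


def resolve_api_base(env=None):
    """Return the API base URL, preferring OPENAI_API_BASE when it is set.

    `env` is any mapping of environment variables; it defaults to os.environ.
    """
    source = os.environ if env is None else env
    # Mirrors os.environ.get('OPENAI_API_BASE', 'http://localhost:8080/v1')
    return source.get("OPENAI_API_BASE", DEFAULT_API_BASE)


if __name__ == "__main__":
    # Prints the default unless OPENAI_API_BASE is exported in the shell.
    print(resolve_api_base())
```

With this pattern, pointing the examples at a different LocalAI instance should only require exporting `OPENAI_API_BASE` before running them. Note that a variable set to an empty string is returned as-is rather than falling back to the default.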
