mirror of https://github.com/mudler/LocalAI.git (synced 2025-05-20 10:35:01 +00:00)

feat(sycl): Add sycl support (#1647)

* onekit: install without prompts
* set cmake args only in grpc-server

  Signed-off-by: Ettore Di Giacinto <mudler@localai.io>
* cleanup
* fixup sycl source env
* Cleanup docs
* ci: runs on self-hosted
* fix typo
* bump llama.cpp
* llama.cpp: update server
* adapt to upstream changes
* adapt to upstream changes
* docs: add sycl

---------

Signed-off-by: Ettore Di Giacinto <mudler@localai.io>

parent 16cebf0390

commit 1c57f8d077

13 changed files with 932 additions and 770 deletions

@ -15,9 +15,45 @@ This section contains instruction on how to use LocalAI with GPU acceleration.

For acceleration on AMD or Metal hardware there are no specific container images; see the [build]({{%relref "docs/getting-started/build#Acceleration" %}}) section.

{{% /alert %}}

### CUDA(NVIDIA) acceleration

#### Requirements

## Model configuration

The way to enable GPU acceleration depends on the model architecture and the backend used. You must configure the model you intend to use with a YAML config file. For example, for `llama.cpp` workloads a configuration file might look like this (where `gpu_layers` is the number of layers to offload to the GPU):

```yaml
name: my-model-name
# Default model parameters
parameters:
  # Relative to the models path
  model: llama.cpp-model.ggmlv3.q5_K_M.bin

context_size: 1024
threads: 1

f16: true # enable with GPU acceleration
gpu_layers: 22 # GPU Layers (only used when built with cublas)
```

For diffusers, the configuration might look like this instead:

```yaml
name: stablediffusion
parameters:
  model: toonyou_beta6.safetensors
backend: diffusers
step: 30
f16: true
diffusers:
  pipeline_type: StableDiffusionPipeline
  cuda: true
  enable_parameters: "negative_prompt,num_inference_steps,clip_skip"
  scheduler_type: "k_dpmpp_sde"
```
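
Once a model is described by a config file like the ones above, clients address it by its `name`. As a minimal sketch (not taken from the LocalAI sources), the Python snippet below builds an OpenAI-style chat request for `my-model-name`. The base URL `http://localhost:8080`, the `/v1/chat/completions` path and the helper name `build_chat_request` are assumptions for illustration; the request is only constructed here, not sent.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request.

    `build_chat_request` is a hypothetical helper, not part of LocalAI.
    """
    payload = {
        # Assumed to match the `name` field of the YAML model config.
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("http://localhost:8080", "my-model-name", "Hello!")
    # Sending needs a running LocalAI instance, so it is left commented out:
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp))
    print(req.get_method(), req.full_url)
```

With a GPU-enabled build and `gpu_layers` set, the server logs for such a request should report the offloaded layers and the VRAM used.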

## CUDA(NVIDIA) acceleration

### Requirements

Requirement: nvidia-container-toolkit (installation instructions [1](https://www.server-world.info/en/note?os=Ubuntu_22.04&p=nvidia&f=2) [2](https://docs.nvidia.com/datacenter/cloud-native/container-toolkit/install-guide.html))

@ -74,37 +110,21 @@ llama_model_load_internal: total VRAM used: 1598 MB

llama_init_from_file: kv self size = 512.00 MB
```

#### Model configuration

The way to enable GPU acceleration depends on the model architecture and the backend used. You must configure the model you intend to use with a YAML config file. For example, for `llama.cpp` workloads a configuration file might look like this (where `gpu_layers` is the number of layers to offload to the GPU):

```yaml
name: my-model-name
# Default model parameters
parameters:
  # Relative to the models path
  model: llama.cpp-model.ggmlv3.q5_K_M.bin

context_size: 1024
threads: 1

f16: true # enable with GPU acceleration
gpu_layers: 22 # GPU Layers (only used when built with cublas)
```

For diffusers, the configuration might look like this instead:

```yaml
name: stablediffusion
parameters:
  model: toonyou_beta6.safetensors
backend: diffusers
step: 30
f16: true
diffusers:
  pipeline_type: StableDiffusionPipeline
  cuda: true
  enable_parameters: "negative_prompt,num_inference_steps,clip_skip"
  scheduler_type: "k_dpmpp_sde"
```

## Intel acceleration (sycl)

#### Requirements

Requirement: [Intel oneAPI Base Toolkit](https://software.intel.com/content/www/us/en/develop/tools/oneapi/base-toolkit/download.html)

To use SYCL, use the images with the `sycl-f16` or `sycl-f32` tag, for example `{{< version >}}-sycl-f32-core`, `{{< version >}}-sycl-f16-ffmpeg-core`, ...

The image list is on [quay](https://quay.io/repository/go-skynet/local-ai?tab=tags).

### Notes

In addition to the commands to run LocalAI normally, you need to specify `--device /dev/dri` to docker, for example:

```bash
docker run --rm -ti --device /dev/dri -p 8080:8080 -e DEBUG=true -e MODELS_PATH=/models -e THREADS=1 -v $PWD/models:/models quay.io/go-skynet/local-ai:{{< version >}}-sycl-f16-ffmpeg-core
```
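
The `docker run` invocation for the SYCL images shown above can also be assembled programmatically, which makes the required `--device /dev/dri` flag hard to forget. This is only a sketch: `sycl_docker_command` is a hypothetical helper, the `version` argument stands in for the `{{< version >}}` shortcode, and the default `ffmpeg-core` suffix is assumed to exist for both precisions (the text above only names `sycl-f32-core` and `sycl-f16-ffmpeg-core`).

```python
import shlex


def sycl_docker_command(version: str, models_dir: str, precision: str = "f16",
                        suffix: str = "ffmpeg-core", port: int = 8080) -> list[str]:
    """Return the argv for running a LocalAI SYCL image (hypothetical helper)."""
    if precision not in ("f16", "f32"):
        raise ValueError(f"unsupported SYCL precision: {precision!r}")
    image = f"quay.io/go-skynet/local-ai:{version}-sycl-{precision}-{suffix}"
    return [
        "docker", "run", "--rm", "-ti",
        "--device", "/dev/dri",          # exposes the Intel GPU to the container
        "-p", f"{port}:8080",
        "-e", "DEBUG=true",
        "-e", "MODELS_PATH=/models",
        "-e", "THREADS=1",
        "-v", f"{models_dir}:/models",
        image,
    ]


if __name__ == "__main__":
    # "<version>" is a placeholder; substitute a real release tag.
    print(shlex.join(sycl_docker_command("<version>", "/srv/models")))
```

Returning an argument list rather than a single string keeps the command safe to pass to `subprocess.run` without shell quoting issues.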

@ -83,7 +83,7 @@ Here is the list of the variables available that can be used to customize the bu

| Variable | Default | Description |
| -------- | ------- | ----------- |
| `BUILD_TYPE` | None | Build type. Available: `cublas`, `openblas`, `clblas`, `metal`, `hipblas` |
| `BUILD_TYPE` | None | Build type. Available: `cublas`, `openblas`, `clblas`, `metal`, `hipblas`, `sycl_f16`, `sycl_f32` |
| `GO_TAGS` | `tts stablediffusion` | Go tags. Available: `stablediffusion`, `tts`, `tinydream` |
| `CLBLAST_DIR` | | Specify a CLBlast directory |
| `CUDA_LIBPATH` | | Specify a CUDA library path |

@ -225,6 +225,17 @@ make BUILD_TYPE=clblas build

To specify a clblast dir set: `CLBLAST_DIR`

#### Intel GPU acceleration

Intel GPU acceleration is supported via SYCL.

Requirements: [Intel oneAPI Base Toolkit](https://www.intel.com/content/www/us/en/developer/tools/oneapi/base-toolkit-download.html) (see also the [llama.cpp setup and installation instructions](https://github.com/ggerganov/llama.cpp/blob/d71ac90985854b0905e1abba778e407e17f9f887/README-sycl.md?plain=1#L56))

```
make BUILD_TYPE=sycl_f16 build # for float16
make BUILD_TYPE=sycl_f32 build # for float32
```
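
Note that the `BUILD_TYPE` values use an underscore (`sycl_f16`), while the container image tags use a hyphen (`sycl-f16`). A small sketch of a wrapper that picks the build type from the desired precision is given below; `sycl_make_command` is a hypothetical helper name, and it only returns the `make` arguments listed above without running them.

```python
# Precision -> BUILD_TYPE value, as listed in the build variables table.
SYCL_BUILD_TYPES = {"f16": "sycl_f16", "f32": "sycl_f32"}


def sycl_make_command(precision: str) -> list[str]:
    """Return the make invocation for a SYCL build (hypothetical helper)."""
    try:
        build_type = SYCL_BUILD_TYPES[precision]
    except KeyError:
        raise ValueError(f"unsupported SYCL precision: {precision!r}") from None
    return ["make", f"BUILD_TYPE={build_type}", "build"]


if __name__ == "__main__":
    print(" ".join(sycl_make_command("f16")))
```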

#### Metal (Apple Silicon)

```

@ -74,14 +74,6 @@ Note that this started just as a fun weekend project by [mudler](https://github.

|

|||

- 🖼️ [Download Models directly from Huggingface ](https://localai.io/models/)

|

||||

- 🆕 [Vision API](https://localai.io/features/gpt-vision/)

|

||||

|

||||

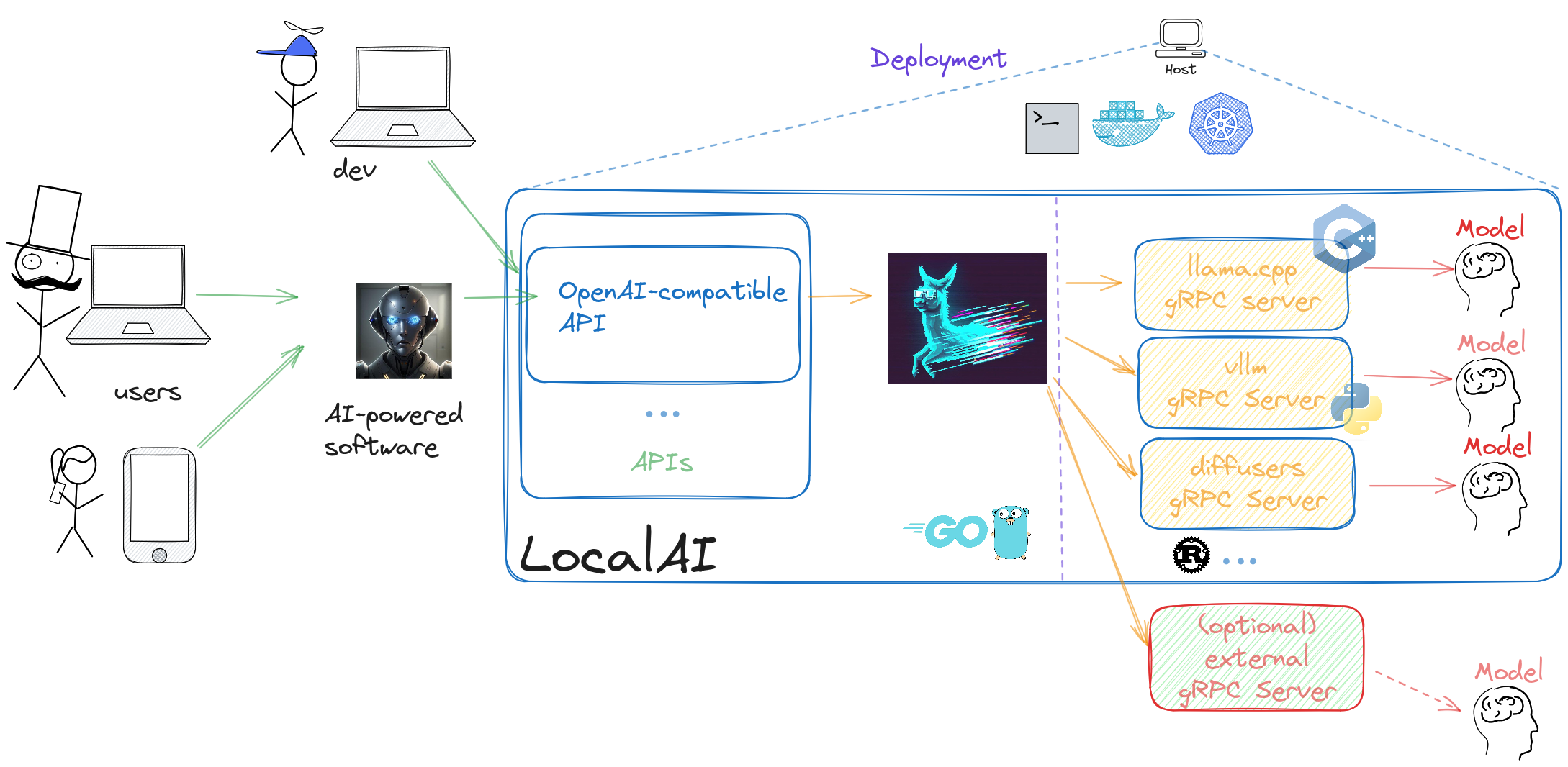

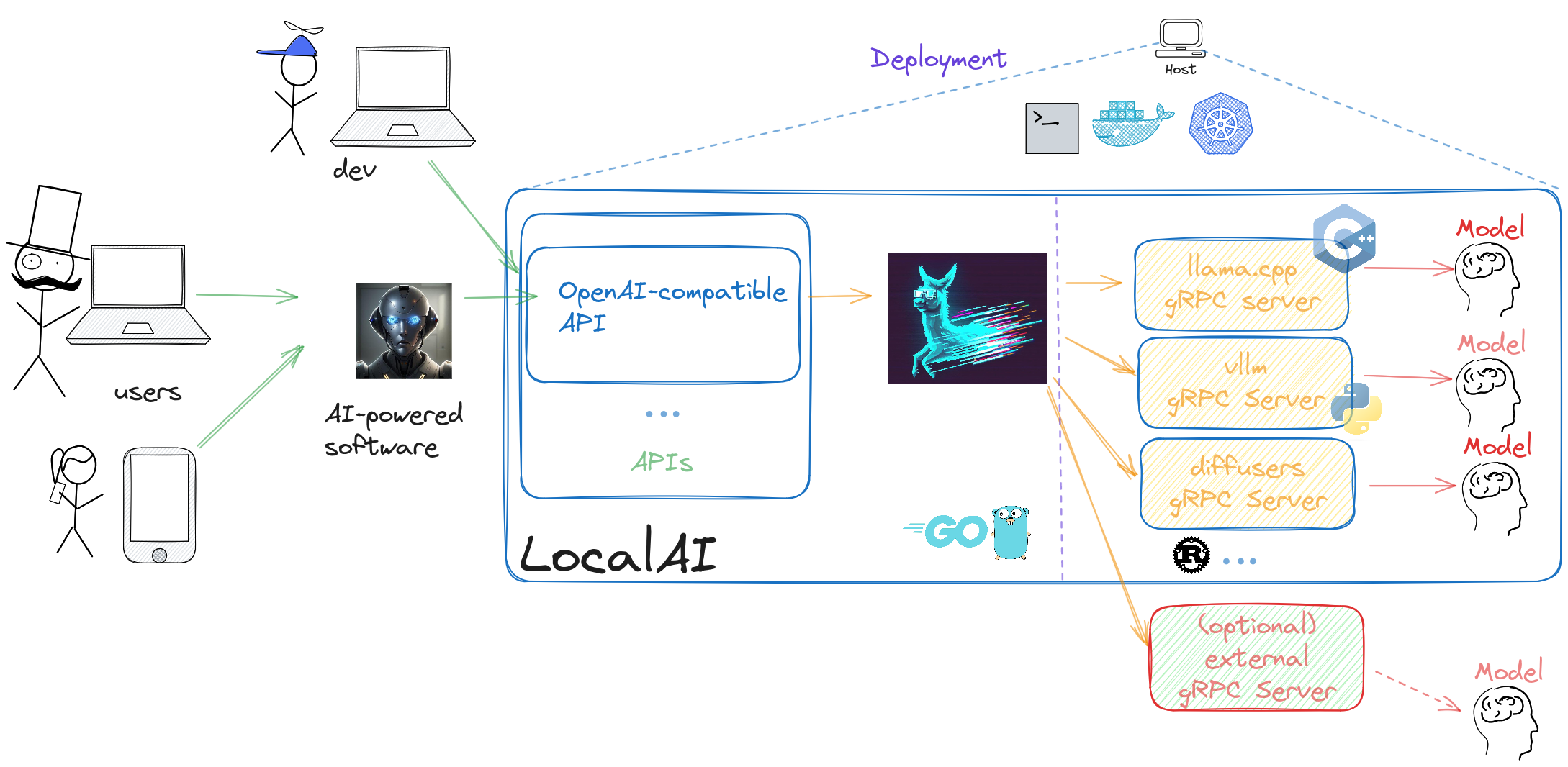

## How does it work?

|

||||

|

||||

LocalAI is an API written in Go that serves as an OpenAI shim, enabling software already developed with OpenAI SDKs to seamlessly integrate with LocalAI. It can be effortlessly implemented as a substitute, even on consumer-grade hardware. This capability is achieved by employing various C++ backends, including [ggml](https://github.com/ggerganov/ggml), to perform inference on LLMs using both CPU and, if desired, GPU. Internally LocalAI backends are just gRPC server, indeed you can specify and build your own gRPC server and extend LocalAI in runtime as well. It is possible to specify external gRPC server and/or binaries that LocalAI will manage internally.

|

||||

|

||||

LocalAI uses a mixture of backends written in various languages (C++, Golang, Python, ...). You can check [the model compatibility table]({{%relref "docs/reference/compatibility-table" %}}) to learn about all the components of LocalAI.

|

||||

|

||||

|

||||

|

||||

## Contribute and help

|

||||

|

||||

To help the project you can:

|

||||

|

|

@ -114,20 +106,6 @@ LocalAI couldn't have been built without the help of great software already avai

- https://github.com/rhasspy/piper
- https://github.com/cmp-nct/ggllm.cpp

## Backstory

As many typical open source projects start, I, [mudler](https://github.com/mudler/), was fiddling around with [llama.cpp](https://github.com/ggerganov/llama.cpp) over my long nights and wanted a way to call it from `go`, as I am a Golang developer and use it extensively. So I created `LocalAI` (or what was initially known as `llama-cli`) and added an API to it.

But guess what? The more I dived into this rabbit hole, the more I realized that I had stumbled upon something big. With all the fantastic C++ projects floating around the community, it dawned on me that I could piece them together to create a full-fledged OpenAI replacement. So, ta-da! LocalAI was born, and it quickly overshadowed its humble origins.

Now, why did I choose to go with C++ bindings, you ask? Well, I wanted to keep LocalAI snappy and lightweight, allowing it to run like a champ on any system, avoid any Golang GC penalties, and, most importantly, build on the shoulders of giants like `llama.cpp`. Go is good for backends and APIs and is easy to maintain. And hey, don't forget that I'm all about sharing the love. That's why I made LocalAI MIT licensed, so everyone can hop on board and benefit from it.

As if that wasn't exciting enough, as the project gained traction, [mkellerman](https://github.com/mkellerman) and [Aisuko](https://github.com/Aisuko) jumped in to lend a hand. mkellerman helped set up some killer examples, while Aisuko is becoming our community maestro. The community is now growing even more with new contributors and users, and I couldn't be happier about it!

Oh, and let's not forget the real MVP here: [llama.cpp](https://github.com/ggerganov/llama.cpp). Without this extraordinary piece of software, LocalAI wouldn't even exist. So, a big shoutout to the community for making this magic happen!

## 🤗 Contributors

This is a community project, a special thanks to our contributors! 🤗

25

docs/content/docs/reference/architecture.md

Normal file


@ -0,0 +1,25 @@

+++
disableToc = false
title = "Architecture"
weight = 25
+++

LocalAI is an API written in Go that serves as an OpenAI shim, enabling software already developed with OpenAI SDKs to integrate with LocalAI seamlessly. It can be used as a drop-in substitute, even on consumer-grade hardware. This is achieved by employing various C++ backends, including [ggml](https://github.com/ggerganov/ggml), to perform inference on LLMs using the CPU and, if desired, the GPU. Internally, LocalAI backends are just gRPC servers: you can specify and build your own gRPC server and extend LocalAI at runtime as well. It is possible to specify external gRPC servers and/or binaries that LocalAI will manage internally.

LocalAI uses a mixture of backends written in various languages (C++, Golang, Python, ...). You can check [the model compatibility table]({{%relref "docs/reference/compatibility-table" %}}) to learn about all the components of LocalAI.

## Backstory

As many typical open source projects start, I, [mudler](https://github.com/mudler/), was fiddling around with [llama.cpp](https://github.com/ggerganov/llama.cpp) over my long nights and wanted a way to call it from `go`, as I am a Golang developer and use it extensively. So I created `LocalAI` (or what was initially known as `llama-cli`) and added an API to it.

But guess what? The more I dived into this rabbit hole, the more I realized that I had stumbled upon something big. With all the fantastic C++ projects floating around the community, it dawned on me that I could piece them together to create a full-fledged OpenAI replacement. So, ta-da! LocalAI was born, and it quickly overshadowed its humble origins.

Now, why did I choose to go with C++ bindings, you ask? Well, I wanted to keep LocalAI snappy and lightweight, allowing it to run like a champ on any system, avoid any Golang GC penalties, and, most importantly, build on the shoulders of giants like `llama.cpp`. Go is good for backends and APIs and is easy to maintain. And hey, don't forget that I'm all about sharing the love. That's why I made LocalAI MIT licensed, so everyone can hop on board and benefit from it.

As if that wasn't exciting enough, as the project gained traction, [mkellerman](https://github.com/mkellerman) and [Aisuko](https://github.com/Aisuko) jumped in to lend a hand. mkellerman helped set up some killer examples, while Aisuko is becoming our community maestro. The community is now growing even more with new contributors and users, and I couldn't be happier about it!

Oh, and let's not forget the real MVP here: [llama.cpp](https://github.com/ggerganov/llama.cpp). Without this extraordinary piece of software, LocalAI wouldn't even exist. So, a big shoutout to the community for making this magic happen!
